TPU Pipelining



This guide serves as a reference for TPU-specific pipelining concerns. We’ll review the memory hierarchy and compute units on TPUs, and TPU-specific features of the pipelining API. For a more general-purpose overview of pipelining, see the Software Pipelining guide.

```python
import jax
from jax.experimental import pallas as pl
from jax.experimental.pallas import tpu as pltpu
import jax.numpy as jnp
import numpy as np
```

TPU and its memory spaces#

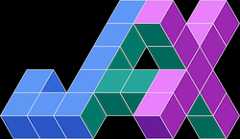

A TPU and its TensorCore consist of memory spaces (where arrays can reside),registers (which temporarily store scalar and array values) and compute units(that do computation with values in registers).Below is a diagram of a TPU in whichx andy are arrays that live inhigh-bandwidth memory (HBM):

Let’s talk about the components of this diagram in more detail:

Memory spaces: A TPU has high-bandwidth memory (HBM), which is what we often think of as “device memory”. There is also vector memory (VMEM), a cache meant for storing vector and array values, and scalar memory (SMEM), a cache designed to store scalar values.

Registers: A TensorCore has two main types of registers: vector registers (VREGs) store array values, and scalar registers (SREGs) store scalar values. Values can be loaded into registers from their respective caches (VMEM for VREGs and SMEM for SREGs).

Compute units: A TensorCore has a scalar unit, a vector unit (VPU) and a matrix unit (MXU) that can do numerical computation. Each of these compute units can operate asynchronously, but this is managed by the TPU compiler, so from the programmer’s perspective a TPU program is single-threaded. Compute units operate on values that live in SREGs and VREGs and output values into those registers as well.

TPU-specific Pipelining Features#

Pallas TPU supports the following platform-specific features.

TPU Memory Spaces#

Pallas exposes all levels of the TPU memory hierarchy to users. The following table maps from Pallas TPU memory spaces to their standard memory types (DRAM/SRAM):

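| Pallas Enum | TPU Memory Space | Type (DRAM/SRAM) |
|---|---|---|
| `pltpu.MemorySpace.ANY` | HBM (usually) or VMEM | DRAM |
| `pltpu.MemorySpace.VMEM` | VMEM | SRAM |
| `pltpu.MemorySpace.SMEM` | SMEM | SRAM |
| `pltpu.MemorySpace.SEMAPHORE` | Semaphore | SRAM |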

- `MemorySpace.VMEM` denotes vector SRAM. It is the default memory space if nothing is specified.
- `MemorySpace.SMEM` denotes scalar SRAM. Only scalar loads and stores can be performed to/from SMEM.
- `MemorySpace.ANY` is a hint to the compiler that the memory space is unconstrained. In most cases, XLA will place this buffer in HBM. A buffer assigned to the `ANY` memory space cannot be dereferenced normally using array indexing syntax (e.g. `x[...]`). Instead, we must first copy the values into a VMEM or SMEM buffer using `pltpu.sync_copy` or `pltpu.async_copy`.
- `MemorySpace.SEMAPHORE` is used to allocate semaphores for constructing barriers or tracking asynchronous operations. It is also possible to return semaphores from the kernel for building asynchronous kernels. This is an experimental feature; see Pallas Async Operations for more details.

Pipelining on TPUs is typically done between HBM (DRAM) and VMEM (vector SRAM). By default, arguments to `pallas_call` on TPU are assumed to live in HBM, and inputs to the user kernel body are stored in VMEM.

While not specific to pipelining, it is possible to gain manual control over the memory space of input and output buffers by specifying the `memory_space` argument on a `BlockSpec`. Note that pipelining is not allowed unless the `memory_space` is marked as `VMEM`. Memory spaces can also be used to specify scratch arguments to a kernel via the `scratch_shapes` argument on `pallas_call`. Scratch buffers are persistent across kernel iterations and are useful for storing intermediate results such as partial accumulations and reductions. A scratch buffer must reside in `VMEM`, `SMEM`, or `SEMAPHORE`.

As an example of using multiple manual memory space assignments in a kernel, the following program copies a slice of an HBM buffer `x_hbm_ref` into a scratch VMEM buffer `scratch_vmem_ref` before using it for arithmetic and storing the result into an output VMEM buffer:

```python
def hbm_vmem_kernel(x_hbm_ref, out_vmem_ref, scratch_vmem_ref):
  # Copy the first row of the HBM buffer into the VMEM scratch buffer.
  pltpu.sync_copy(x_hbm_ref.at[0:1], scratch_vmem_ref)
  # Compute on the VMEM copy and write the result to the VMEM output.
  out_vmem_ref[...] = scratch_vmem_ref[...] + 1

x = jax.random.uniform(jax.random.key(0), (8, 128), jnp.float32)
out = pl.pallas_call(
    hbm_vmem_kernel,
    in_specs=[pl.BlockSpec(memory_space=pltpu.MemorySpace.ANY)],
    out_shape=jax.ShapeDtypeStruct((1, 128), jnp.float32),
    scratch_shapes=(pltpu.MemorySpace.VMEM(shape=(1, 128), dtype=jnp.float32),),
)(x)

np.testing.assert_allclose(out, x[0:1] + 1)
```

Multiple Buffering#

Multiple buffering can be specified on a per-argument basis to the pipeline via the `pipeline_mode` option on `pl.BlockSpec`. To do so, pass a `pl.Buffered` object to `pl.BlockSpec` specifying the number of buffers to allocate for this particular argument:

```python
pl.BlockSpec(
    pipeline_mode=pl.Buffered(buffer_count=buffer_count)
)
```

The default buffer count is 2 for all inputs and outputs.
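A practical consequence of the buffer count is VMEM usage. The following back-of-the-envelope sketch in plain Python estimates it, assuming the pipeline keeps `buffer_count` copies of each block resident in VMEM. The helper name `pipeline_vmem_bytes` is hypothetical (it is not part of Pallas), and the estimate ignores any layout padding or other overhead the compiler may add.

```python
import math

def pipeline_vmem_bytes(block_shape, itemsize, buffer_count=2):
  """Rough VMEM bytes for one pipelined argument (hypothetical helper).

  Assumes `buffer_count` copies of one block are resident at a time and
  ignores layout padding or other compiler-added overhead.
  """
  return math.prod(block_shape) * itemsize * buffer_count

# Two float32 inputs and one float32 output, each pipelined in (256, 512) blocks.
block = (256, 512)
default_total = 3 * pipeline_vmem_bytes(block, itemsize=4)  # buffer_count=2
triple_total = 3 * pipeline_vmem_bytes(block, itemsize=4, buffer_count=3)

print(default_total // 1024, "KiB with double buffering")  # 3072 KiB
print(triple_total // 1024, "KiB with triple buffering")   # 4608 KiB
```

Under these assumptions, moving from two to three buffers raises the footprint by half, which is worth checking against the available VMEM before increasing `buffer_count`.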

pltpu.emit_pipeline#

`pltpu.emit_pipeline` is a pipelining API implemented in Pallas that allows you to construct pipelines inside of a kernel rather than only on kernel entry. This enables several use cases beyond what `pl.pallas_call` offers, such as:

- Constructing nested pipelines. For example, an outer pipeline that communicates between chips, and an inner pipeline that performs HBM-VMEM pipelining.
- Using `emit_pipeline`-specific features such as lookahead prefetch and dynamic block shapes (covered below).

`pltpu.emit_pipeline` follows a similar signature to `pl.pallas_call` and requires you to specify a body `kernel`, a grid, and block specs for inputs and outputs:

```python
def emit_pipeline(
    kernel: Callable,
    grid: tuple[int],
    in_specs: PyTree[BlockSpec] = None,
    out_specs: PyTree[BlockSpec] = None,
    dimension_semantics: tuple[GridDimensionSemantics] = None,
    core_axis: int | None = None,
) -> Callable:
  ...  # Returns a custom pipeline given an inner kernel and BlockSpecs.
```

The `dimension_semantics` and `core_axis` arguments are used for partitioning the kernel grid over Megacore (see below).

Lookahead Prefetch#

Lookahead prefetch is a pipelining feature where the pipeline attempts to prefetch the next input block as soon as a buffering slot is available, rather than waiting until the iteration directly before the block is used. For example, if the kernel had a grid of `(8,)` and the block indices to fetch on each iteration were `0, 0, 0, 0, 1, 1, 1, 1`, then lookahead prefetch begins fetching both blocks `0` and `1` on iteration 0, whereas the standard pipeline schedule fetches block `0` on iteration 0 but does not begin fetching block `1` until iteration 3. Lookahead adds a small amount of control flow overhead, so it is disabled by default.

Lookahead is primarily useful when the amount of compute work varies from block to block, such as when some blocks are skipped or contain a reduced amount of work. In these cases, the iteration immediately preceding the step where a block is needed may not contain enough compute work to fully overlap with the memory transfer, so we would like to begin fetching blocks earlier in the pipeline.
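To make the difference between the two schedules concrete, here is a toy model in plain Python. It is not the Pallas implementation: the function `fetch_start_iterations` is hypothetical, and it assumes that a buffer slot becomes free on the iteration after the last use of the block it held, and that at least two buffers are available.

```python
def fetch_start_iterations(block_indices, buffer_count=2, use_lookahead=False):
  """Return [(block, iteration its fetch is issued)] under a toy model."""
  assert buffer_count >= 2, "the toy model assumes at least double buffering"
  # Collapse consecutive repeats into runs of (block, first_use, last_use).
  runs = []
  for it, blk in enumerate(block_indices):
    if runs and runs[-1][0] == blk:
      runs[-1] = (blk, runs[-1][1], it)
    else:
      runs.append((blk, it, it))

  starts = []
  for n, (blk, first_use, _) in enumerate(runs):
    if not use_lookahead:
      # Standard schedule: fetch on the iteration directly before first use.
      starts.append((blk, max(first_use - 1, 0)))
    elif n < buffer_count:
      # Lookahead: the first `buffer_count` blocks find a free slot at once.
      starts.append((blk, 0))
    else:
      # Later blocks reuse the slot of the block `buffer_count` runs earlier,
      # assumed free on the iteration after that block's last use.
      starts.append((blk, runs[n - buffer_count][2] + 1))
  return starts

indices = [0, 0, 0, 0, 1, 1, 1, 1]
print(fetch_start_iterations(indices))                      # [(0, 0), (1, 3)]
print(fetch_start_iterations(indices, use_lookahead=True))  # [(0, 0), (1, 0)]
```

With lookahead, the fetch of block `1` has four iterations of compute to hide behind instead of one, which is what helps when individual iterations do little work.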

Lookahead prefetch can be used in conjunction with multiple buffering and can likewise be enabled by passing `pl.Buffered` into the `pipeline_mode` argument:

```python
pl.BlockSpec(
    pipeline_mode=pl.Buffered(buffer_count=buffer_count, use_lookahead=True)
)
```

Dynamic Block Shapes#

`pltpu.emit_pipeline` supports pipelining over blocks with dynamic but bounded shapes. To specify such a block shape, mark the dynamic-sized dimension in the block with `pl.BoundedSlice(max_size)` rather than a static integer size, where `max_size` is the maximum size of the block. In addition, the corresponding index returned by `index_map` should be a dynamic slice constructed via `pl.ds(start, size)`, where both `start` and `size` are element indices (not block indices) and can be dynamic.

The following is an example for a block spec with a dynamic first dimension:

```python
pl.BlockSpec(
    block_shape=(pl.BoundedSlice(32), 256),
    # `start` and `size` are element indices and may be dynamic values.
    index_map=lambda *grid_idxs: (pl.ds(start, size), 0),
)
```

```python
# The following kernel copies `x` to the output in dynamic-sized chunks
# passed in via `slices`.

def dynamic_block_example_kernel(x_hbm, slices_hbm, o_hbm, slices_smem):
  pltpu.sync_copy(slices_hbm, slices_smem)  # Copy slices into SMEM.

  def pipeline_body(x_vmem, o_vmem):
    o_vmem[...] = x_vmem[...]

  def index_map(i):
    start = slices_smem[i, 0]
    size = slices_smem[i, 1] - slices_smem[i, 0]
    return (pl.ds(start, size), 0)

  block_spec = pl.BlockSpec(block_shape=(pl.BoundedSlice(8), 128),
                            index_map=index_map)
  pltpu.emit_pipeline(
      pipeline_body,
      grid=(slices.shape[0],),
      in_specs=[block_spec],
      out_specs=block_spec,
  )(x_hbm, o_hbm)

x = jax.random.uniform(jax.random.key(0), (8, 128), jnp.float32)
slices = jnp.array([[0, 2], [2, 3], [3, 5], [5, 8]], dtype=jnp.int32)

hbm_block_spec = pl.BlockSpec(memory_space=pltpu.MemorySpace.ANY)
out = pl.pallas_call(
    dynamic_block_example_kernel,
    in_specs=[hbm_block_spec, hbm_block_spec],
    out_specs=hbm_block_spec,
    out_shape=jax.ShapeDtypeStruct((8, 128), jnp.float32),
    scratch_shapes=(pltpu.MemorySpace.SMEM(slices.shape, jnp.int32),),
)(x, slices)

np.testing.assert_allclose(x, out)
```
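To make the index arithmetic concrete, here is a plain-Python reference of what the kernel above computes, with no Pallas involved. The helper `copy_in_bounded_chunks` is hypothetical. It treats each row of `slices` as a `(start, stop)` pair of element indices, derives `size = stop - start` the same way `index_map` does, and checks each size against the bound that `pl.BoundedSlice` would declare. The bounds check is an assumption made for illustration, not a statement about how Pallas reports violations.

```python
def copy_in_bounded_chunks(x, slices, max_size):
  """Copy rows of `x` chunk by chunk (hypothetical reference, not Pallas)."""
  out = [None] * len(x)
  for start, stop in slices:
    size = stop - start  # Same arithmetic as `index_map` in the kernel.
    if not 0 <= size <= max_size:
      raise ValueError(f"chunk size {size} is outside [0, {max_size}]")
    # Element indices, not block indices: rows [start, start + size).
    out[start:start + size] = x[start:start + size]
  return out

x = [[float(r * 128 + c) for c in range(128)] for r in range(8)]
slices = [(0, 2), (2, 3), (3, 5), (5, 8)]
out = copy_in_bounded_chunks(x, slices, max_size=8)
print(out == x)  # True
```

The chunk sizes here are 2, 1, 2 and 3 rows, all within the bound of 8, and together they cover every row exactly once, which is why the output equals the input.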

TPUs in Megacore configuration#

Some TPU chips have two TensorCores but appear as one device to JAX users. This is called “megacore”. The separate TensorCores have their own separate VMEM, VREGs, SMEM, SREGs and compute units but share HBM.

Conceptually, TPUs in Megacore behave like very simple GPUs, i.e. they have only two threads. How do we modify our kernels to utilize both TensorCores simultaneously?

The basic idea is that if we have embarrassingly parallel dimensions in our computation, we can split up those dimensions across the TensorCores. We can indicate which dimensions are parallelizable by providing an annotation to `pallas_call` called `dimension_semantics`.

```python
def add_matrices_kernel(x_vmem_ref, y_vmem_ref, z_vmem_ref):
  # Load x and y from VMEM into VREGs
  x_vregs = x_vmem_ref[:, :]
  y_vregs = y_vmem_ref[:, :]
  # Execute a vectorized add
  z_vregs = x_vregs + y_vregs
  # Store the output values in VREGs back into VMEM
  z_vmem_ref[:, :] = z_vregs

def add_matrices_pipelined_megacore(x: jax.Array, y: jax.Array) -> jax.Array:
  block_spec = pl.BlockSpec((256, 512), lambda i: (i, 0))
  return pl.pallas_call(
      add_matrices_kernel,
      out_shape=jax.ShapeDtypeStruct(x.shape, x.dtype),
      in_specs=[block_spec, block_spec],
      out_specs=block_spec,
      grid=(2,),
      compiler_params=pltpu.CompilerParams(
          dimension_semantics=("parallel",)),
  )(x, y)

x, y = jnp.ones((512, 512)), jnp.ones((512, 512))
add_matrices_pipelined_megacore(x, y)
```

```
Array([[2., 2., 2., ..., 2., 2., 2.],
       [2., 2., 2., ..., 2., 2., 2.],
       [2., 2., 2., ..., 2., 2., 2.],
       ...,
       [2., 2., 2., ..., 2., 2., 2.],
       [2., 2., 2., ..., 2., 2., 2.],
       [2., 2., 2., ..., 2., 2., 2.]], dtype=float32)
```

`dimension_semantics` should be a tuple of the same length as `grid`, where each entry is either `"parallel"` or `"arbitrary"`. `"parallel"` indicates to Pallas that the iterations of the for loop corresponding to that dimension can be executed independently without affecting the correctness of the program. `"arbitrary"` indicates to Pallas that no assumptions can be made about this grid dimension and it therefore cannot be parallelized.
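The rule above is easy to check before launching a kernel. The sketch below is a hypothetical plain-Python helper (`parallel_axes` is not a Pallas function) that validates a `dimension_semantics` tuple against a grid, using only the two values described above, and returns the axes that are marked as safe to split.

```python
def parallel_axes(grid, dimension_semantics):
  """Return indices of grid axes marked "parallel" (hypothetical helper)."""
  if len(dimension_semantics) != len(grid):
    raise ValueError("dimension_semantics needs one entry per grid axis")
  for entry in dimension_semantics:
    if entry not in ("parallel", "arbitrary"):
      raise ValueError(f"unknown dimension semantics: {entry!r}")
  return [i for i, entry in enumerate(dimension_semantics)
          if entry == "parallel"]

# A reduction-style grid: rows are independent, the second axis accumulates.
print(parallel_axes((4, 8), ("parallel", "arbitrary")))  # [0]
```

An axis that accumulates into a shared output block, such as a reduction axis, is the typical case that should stay `"arbitrary"`, because its iterations are not independent.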

By specifying `dimension_semantics`, we now execute the kernel simultaneously on each TensorCore. Pallas will handle splitting up the grid automatically.

Note that Megacore is currently only available on TPU `v4` and TPU `v5p`. Supplying `dimension_semantics` annotations is a no-op on other platforms, but not specifying it will result in only one TensorCore being used (even if more than one is available).

When using `pltpu.emit_pipeline`, `core_axis` should be passed into `emit_pipeline`. `core_axis` should be the index of a parallel grid axis to partition the grid on. For example, the following template can be used to partition the kernel over a leading parallel grid dimension:

```python
def kernel_body(...):
  def inner_pipeline_body(...):
    ...
  pltpu.emit_pipeline(inner_pipeline_body,
                      grid=(4, 4),
                      core_axis=0,
                      dimension_semantics=("parallel", "sequential"))

pl.pallas_call(
    kernel_body,
    grid=(num_cores,),
    compiler_params=pltpu.CompilerParams(
        dimension_semantics=("parallel",)),
)
```
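As a mental model for `core_axis`, the plain-Python sketch below splits a grid along one axis into contiguous per-core ranges. It only illustrates the idea: the function `split_grid_over_cores` is hypothetical, and the partitioning that `emit_pipeline` actually performs (for example, how it handles extents that do not divide evenly) may differ.

```python
def split_grid_over_cores(grid, core_axis, num_cores):
  """Return, per core, the (start, stop) range it covers on `core_axis`."""
  extent = grid[core_axis]
  per_core = -(-extent // num_cores)  # Ceiling division.
  ranges = []
  for core in range(num_cores):
    start = min(core * per_core, extent)
    stop = min(start + per_core, extent)
    ranges.append((start, stop))
  return ranges

# The (4, 4) grid from the template above, split on axis 0 over two cores.
print(split_grid_over_cores((4, 4), core_axis=0, num_cores=2))  # [(0, 2), (2, 4)]
```

In this model each core iterates over a `(2, 4)` sub-grid, while the second axis is left whole on both cores.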