
IF by DeepFloyd Lab at StabilityAI

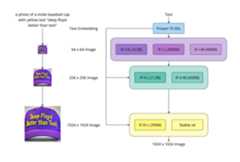

We introduce DeepFloyd IF, a novel state-of-the-art open-source text-to-image model with a high degree of photorealism and language understanding. DeepFloyd IF is modular, composed of a frozen text encoder and three cascaded pixel diffusion modules: a base model that generates a 64x64 px image from a text prompt, and two super-resolution models that generate images of increasing resolution, 256x256 px and 1024x1024 px. All stages of the model use a frozen text encoder based on the T5 transformer to extract text embeddings, which are then fed into a UNet architecture enhanced with cross-attention and attention pooling. The result is a highly efficient model that outperforms current state-of-the-art models, achieving a zero-shot FID score of 6.66 on the COCO dataset. Our work underscores the potential of larger UNet architectures in the first stage of cascaded diffusion models and depicts a promising future for text-to-image synthesis.

Inspired by Photorealistic Text-to-Image Diffusion Models with Deep Language Understanding

- 16GB vRAM for IF-I-XL (4.3B text to 64x64 base module) & IF-II-L (1.2B to 256x256 upscaler module)

- 24GB vRAM for IF-I-XL (4.3B text to 64x64 base module) & IF-II-L (1.2B to 256x256 upscaler module) & Stable x4 (to 1024x1024 upscaler)

- `xformers` and set env variable `FORCE_MEM_EFFICIENT_ATTN=1`
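The VRAM figures in the list above can be read as a simple threshold lookup. The sketch below is illustrative only: `stages_for_vram` is a hypothetical helper, not part of `deepfloyd_if`, and its thresholds are just the 16 GB and 24 GB figures quoted in the list.

```python
from typing import List


def stages_for_vram(vram_gb: float) -> List[str]:
    """Return the modules that the requirements list says fit in `vram_gb` of VRAM."""
    stages: List[str] = []
    if vram_gb >= 16:
        # 16 GB: base module plus the 256x256 upscaler
        stages += ["IF-I-XL", "IF-II-L"]
    if vram_gb >= 24:
        # 24 GB: additionally the 1024x1024 upscaler
        stages.append("Stable x4")
    return stages


print(stages_for_vram(16))
print(stages_for_vram(24))
```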

```shell
pip install deepfloyd_if==1.0.2rc0
pip install xformers==0.0.16
pip install git+https://github.com/openai/CLIP.git --no-deps
```
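The `FORCE_MEM_EFFICIENT_ATTN` variable can also be set from Python instead of the shell. This is a minimal sketch. It assumes the variable is read when `deepfloyd_if` is imported, which is why it is set before any such import; that timing is an assumption, not something this README states.

```python
import os

# Assumption: the flag is read at import time, so set it before importing deepfloyd_if.
os.environ["FORCE_MEM_EFFICIENT_ATTN"] = "1"

print(os.environ["FORCE_MEM_EFFICIENT_ATTN"])
```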

The Dream, Style Transfer, Super Resolution and Inpainting modes are available in a Jupyter Notebook here.

IF is also integrated with the 🤗 Hugging Face Diffusers library.

Diffusers runs each stage individually, which lets the user customize the image generation process and inspect intermediate results easily.

Before you can use IF, you need to accept its usage conditions. To do so:

- Make sure you have a Hugging Face account and are logged in

- Accept the license on the model card of DeepFloyd/IF-I-XL-v1.0

- Make sure you are logged in locally. Install `huggingface_hub`

```shell
pip install huggingface_hub --upgrade
```

run the login function in a Python shell

```python
from huggingface_hub import login

login()
```

and enter your Hugging Face Hub access token.

Next we install `diffusers` and dependencies:

```shell
pip install diffusers accelerate transformers safetensors
```

And we can now run the model locally.

By default `diffusers` makes use of model cpu offloading to run the whole IF pipeline with as little as 14 GB of VRAM.

If you are using `torch>=2.0.0`, make sure to delete all `enable_xformers_memory_efficient_attention()` calls.

```python
from diffusers import DiffusionPipeline
from diffusers.utils import pt_to_pil
import torch

# stage 1
stage_1 = DiffusionPipeline.from_pretrained("DeepFloyd/IF-I-XL-v1.0", variant="fp16", torch_dtype=torch.float16)
stage_1.enable_xformers_memory_efficient_attention()  # remove line if torch.__version__ >= 2.0.0
stage_1.enable_model_cpu_offload()

# stage 2
stage_2 = DiffusionPipeline.from_pretrained(
    "DeepFloyd/IF-II-L-v1.0", text_encoder=None, variant="fp16", torch_dtype=torch.float16
)
stage_2.enable_xformers_memory_efficient_attention()  # remove line if torch.__version__ >= 2.0.0
stage_2.enable_model_cpu_offload()

# stage 3
safety_modules = {
    "feature_extractor": stage_1.feature_extractor,
    "safety_checker": stage_1.safety_checker,
    "watermarker": stage_1.watermarker,
}
stage_3 = DiffusionPipeline.from_pretrained(
    "stabilityai/stable-diffusion-x4-upscaler", **safety_modules, torch_dtype=torch.float16
)
stage_3.enable_xformers_memory_efficient_attention()  # remove line if torch.__version__ >= 2.0.0
stage_3.enable_model_cpu_offload()

prompt = 'a photo of a kangaroo wearing an orange hoodie and blue sunglasses standing in front of the eiffel tower holding a sign that says "very deep learning"'

# text embeds
prompt_embeds, negative_embeds = stage_1.encode_prompt(prompt)

generator = torch.manual_seed(0)

# stage 1
image = stage_1(
    prompt_embeds=prompt_embeds, negative_prompt_embeds=negative_embeds, generator=generator, output_type="pt"
).images
pt_to_pil(image)[0].save("./if_stage_I.png")

# stage 2
image = stage_2(
    image=image,
    prompt_embeds=prompt_embeds,
    negative_prompt_embeds=negative_embeds,
    generator=generator,
    output_type="pt",
).images
pt_to_pil(image)[0].save("./if_stage_II.png")

# stage 3
image = stage_3(prompt=prompt, image=image, generator=generator, noise_level=100).images
image[0].save("./if_stage_III.png")
```
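The version rule for the xformers calls (keep them on torch older than 2.0.0, drop them otherwise) can be expressed as a small check instead of editing the script by hand. This is a sketch: `needs_xformers_call` is a hypothetical helper, not part of `diffusers` or `torch`, and it only inspects the major version number.

```python
def needs_xformers_call(torch_version: str) -> bool:
    """Return True when torch is older than 2.0.0, i.e. the xformers call should be kept."""
    # Version strings look like "1.13.1" or "2.0.0+cu117"; the major number is enough here.
    major = int(torch_version.split(".")[0])
    return major < 2


print(needs_xformers_call("1.13.1"))
print(needs_xformers_call("2.0.0+cu117"))
```

In a pipeline script, each call could then be guarded with `if needs_xformers_call(torch.__version__): stage_1.enable_xformers_memory_efficient_attention()`.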

There are multiple ways to speed up inference and lower memory consumption even further with `diffusers`. To do so, please have a look at the Diffusers docs.

For more detailed information about how to use IF, please have a look at the IF blog post and the documentation 📖.

Diffusers dreambooth scripts also support fine-tuning 🎨 IF. With parameter-efficient fine-tuning, you can add new concepts to IF with a single GPU and ~28 GB VRAM.

```python
from deepfloyd_if.modules import IFStageI, IFStageII, StableStageIII
from deepfloyd_if.modules.t5 import T5Embedder

device = 'cuda:0'
if_I = IFStageI('IF-I-XL-v1.0', device=device)
if_II = IFStageII('IF-II-L-v1.0', device=device)
if_III = StableStageIII('stable-diffusion-x4-upscaler', device=device)
t5 = T5Embedder(device="cpu")
```

Dream is the text-to-image mode of the IF model.

```python
from deepfloyd_if.pipelines import dream

prompt = 'ultra close-up color photo portrait of rainbow owl with deer horns in the woods'
count = 4

result = dream(
    t5=t5, if_I=if_I, if_II=if_II, if_III=if_III,
    prompt=[prompt]*count,
    seed=42,
    if_I_kwargs={
        "guidance_scale": 7.0,
        "sample_timestep_respacing": "smart100",
    },
    if_II_kwargs={
        "guidance_scale": 4.0,
        "sample_timestep_respacing": "smart50",
    },
    if_III_kwargs={
        "guidance_scale": 9.0,
        "noise_level": 20,
        "sample_timestep_respacing": "75",
    },
)

if_III.show(result['III'], size=14)
```

In Style Transfer mode, the output of your prompt comes out in the style of the `support_pil_img`.

```python
from deepfloyd_if.pipelines import style_transfer

# raw_pil_image is not defined in this snippet; it must be a PIL image loaded beforehand.
result = style_transfer(
    t5=t5, if_I=if_I, if_II=if_II,
    support_pil_img=raw_pil_image,
    style_prompt=[
        'in style of professional origami',
        'in style of oil art, Tate modern',
        'in style of plastic building bricks',
        'in style of classic anime from 1990',
    ],
    seed=42,
    if_I_kwargs={
        "guidance_scale": 10.0,
        "sample_timestep_respacing": "10,10,10,10,10,10,10,10,0,0",
        'support_noise_less_qsample_steps': 5,
    },
    if_II_kwargs={
        "guidance_scale": 4.0,
        "sample_timestep_respacing": 'smart50',
        "support_noise_less_qsample_steps": 5,
    },
)

if_I.show(result['II'], 1, 20)
```
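The comma-separated `sample_timestep_respacing` value is easier to reason about once expanded. The sketch below assumes the string follows the per-section convention from OpenAI's improved-diffusion code, where the schedule is split into equal sections and each number says how many steps to sample from that section. Whether `deepfloyd_if` interprets the string exactly this way is an assumption, and `respacing_summary` is a hypothetical helper, not a library function. Named presets such as "smart100" are not handled.

```python
from typing import Dict, List


def respacing_summary(spec: str) -> Dict[str, object]:
    """Summarize a comma-separated respacing string such as "10,10,0,0"."""
    counts: List[int] = [int(part) for part in spec.split(",")]
    return {
        "sections": len(counts),        # number of equal schedule sections
        "total_steps": sum(counts),     # steps actually sampled
        "skipped_sections": [i for i, c in enumerate(counts) if c == 0],
    }


summary = respacing_summary("10,10,10,10,10,10,10,10,0,0")
print(summary)
```

Under that assumption, the style-transfer setting for stage I samples 80 steps in total and skips the last two of its ten sections.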

For super-resolution, users can run IF-II and IF-III or 'Stable x4' on an image that was not necessarily generated by IF (two cascades):

```python
from deepfloyd_if.pipelines import super_resolution

# raw_pil_image is not defined in this snippet; it must be a PIL image loaded beforehand.
middle_res = super_resolution(
    t5,
    if_III=if_II,
    prompt=['woman with a blue headscarf and a blue sweater, detailed picture, 4k dslr, best quality'],
    support_pil_img=raw_pil_image,
    img_scale=4.,
    img_size=64,
    if_III_kwargs={
        'sample_timestep_respacing': 'smart100',
        'aug_level': 0.5,
        'guidance_scale': 6.0,
    },
)

high_res = super_resolution(
    t5,
    if_III=if_III,
    prompt=[''],
    support_pil_img=middle_res['III'][0],
    img_scale=4.,
    img_size=256,
    if_III_kwargs={
        "guidance_scale": 9.0,
        "noise_level": 20,
        "sample_timestep_respacing": "75",
    },
)

# show_superres is not defined in this snippet; it is assumed to be a display helper provided elsewhere.
show_superres(raw_pil_image, high_res['III'][0])
```
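Both super-resolution calls use `img_scale=4.`, so the image side length quadruples at each pass. The sketch below only illustrates that arithmetic. It assumes the output side is `img_size` multiplied by `img_scale`, and `upscaled_sizes` is a hypothetical helper, not part of `deepfloyd_if`.

```python
from typing import List


def upscaled_sizes(img_size: int, img_scale: float, passes: int) -> List[int]:
    """Return the side length before the first pass and after each upscaling pass."""
    sizes = [img_size]
    for _ in range(passes):
        sizes.append(int(sizes[-1] * img_scale))
    return sizes


print(upscaled_sizes(64, 4.0, 2))
```

With a 64 px input and two passes this gives 64, 256 and 1024 px, matching the resolutions of the three cascade stages.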

```python
from deepfloyd_if.pipelines import inpainting

result = inpainting(
    t5=t5, if_I=if_I,
    if_II=if_II,
    if_III=if_III,
    support_pil_img=raw_pil_image,
    inpainting_mask=inpainting_mask,
    prompt=[
        'oil art, a man in a hat',
    ],
    seed=42,
    if_I_kwargs={
        "guidance_scale": 7.0,
        "sample_timestep_respacing": "10,10,10,10,10,0,0,0,0,0",
        'support_noise_less_qsample_steps': 0,
    },
    if_II_kwargs={
        "guidance_scale": 4.0,
        'aug_level': 0.0,
        "sample_timestep_respacing": '100',
    },
    if_III_kwargs={
        "guidance_scale": 9.0,
        "noise_level": 20,
        "sample_timestep_respacing": "75",
    },
)

if_I.show(result['I'], 2, 3)
if_I.show(result['II'], 2, 6)
if_I.show(result['III'], 2, 14)
```

Links to download the weights, as well as the model cards, will be available soon for each model in the model zoo.

| Name | Cascade | Params | FID | Batch size | Steps |
|---|---|---|---|---|---|
| IF-I-M | I | 400M | 8.86 | 3072 | 2.5M |
| IF-I-L | I | 900M | 8.06 | 3200 | 3.0M |
| IF-I-XL* | I | 4.3B | 6.66 | 3072 | 2.42M |
| IF-II-M | II | 450M | - | 1536 | 2.5M |
| IF-II-L* | II | 1.2B | - | 1536 | 2.5M |
| IF-III-L* (soon) | III | 700M | - | 3072 | 1.25M |

*best modules

FID = 6.66

The code in this repository is released under the bespoke license (see added point two).

The weights will be available soon via the DeepFloyd organization at Hugging Face and have their own LICENSE.

Disclaimer: The initial release of the IF model is under a restricted research-purposes-only license temporarily to gather feedback, and after that we intend to release a fully open-source model in line with other Stability AI models.

The models available in this codebase have known limitations and biases. Please refer to the model card for more information.

- Alex Shonenkov GitHub | Linktr

- Misha Konstantinov GitHub | Twitter

- Daria Bakshandaeva GitHub | Twitter

- Christoph Schuhmann GitHub | Twitter

- Ksenia Ivanova GitHub | Twitter

- Nadiia Klokova GitHub | Twitter

Special thanks to StabilityAI and its CEO Emad Mostaque for invaluable support, providing GPU compute and infrastructure to train the models (our gratitude goes to Richard Vencu); thanks to LAION and Christoph Schuhmann in particular for contribution to the project and well-prepared datasets; thanks to Huggingface teams for optimizing models' speed and memory consumption during inference, creating demos and giving cool advice!

- The Biggest Thanks @Apolinário, for ideas, consultations, help and support on all stages to make IF available in open-source; for writing a lot of documentation and instructions; for creating a friendly atmosphere in difficult moments 🦉;

- Thanks, @patrickvonplaten, for improving loading time of unet models by 80%; for integrating Stable-Diffusion-x4 as a native pipeline 💪;

- Thanks, @williamberman and @patrickvonplaten for diffusers integration 🙌;

- Thanks, @hysts and @Apolinário for creating the best gradio demo with IF 🚀;

- Thanks, @Dango233, for adapting IF with xformers memory efficient attention 💪;
