
Classification

In a classification problem, we typically have some input vectors x and some desired output labels y. Let's consider a simple classification problem called the yin-yang problem. In this problem, we have two classes of elements: those belonging to the positive class, shown in blue, and those belonging to the negative class, shown in red.

This data can be downloaded in Excel format here. In order to load this data into an application, let's use the ExcelReader class together with some extension methods from the Accord.Math namespace. Add the following using directives at the top of your source file:

    using System.Data; // for DataTable
    using Accord.Controls;
    using Accord.IO;
    using Accord.Math;
    using Accord.Statistics.Distributions.Univariate;
    using Accord.MachineLearning.Bayes;

Then, let's write the following code:

    // Read the Excel worksheet into a DataTable
    DataTable table = new ExcelReader("examples.xls").GetWorksheet("Classification - Yin Yang");

    // Convert the DataTable to input and output vectors
    double[][] inputs = table.ToJagged<double>("X", "Y");
    int[] outputs = table.Columns["G"].ToArray<int>();

    // Plot the data
    ScatterplotBox.Show("Yin-Yang", inputs, outputs).Hold();

After running this code, the following scatter plot is shown on the screen:

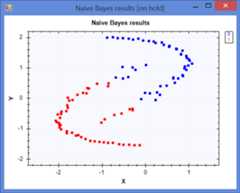

Naive Bayes classifiers are simple probabilistic classifiers based on Bayes' theorem with strong independence assumptions between the features.
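Written out, and assuming Gaussian class-conditional densities (this is what the `NormalDistribution` choice in the code below suggests; the symbols are our own notation, not the framework's), the classifier picks the class with the largest prior-times-likelihood score, where the likelihood factorizes over the features because of the independence assumption:

```latex
\hat{y} = \arg\max_{c} \; P(c) \prod_{i=1}^{d} p(x_i \mid c),
\qquad
p(x_i \mid c) = \mathcal{N}\!\left(x_i;\ \mu_{c,i},\ \sigma_{c,i}^{2}\right)
```

In the yin-yang problem there are d = 2 features (the x and y coordinates) and two classes, so under this assumption learning amounts to estimating one prior plus a mean and a variance per feature for each class.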

    // In our problem, we have 2 classes (samples can be either
    // positive or negative), and 2 inputs (x and y coordinates).

    // Create a Naive Bayes learning algorithm
    var teacher = new NaiveBayesLearning<NormalDistribution>();

    // Use the learning algorithm to learn
    var nb = teacher.Learn(inputs, outputs);

    // At this point, the learning algorithm should have
    // figured out important details about the problem itself:
    int numberOfClasses = nb.NumberOfClasses; // should be 2 (positive or negative)
    int numberOfInputs = nb.NumberOfInputs;   // should be 2 (x and y coordinates)

    // Classify the samples using the model
    int[] answers = nb.Decide(inputs);

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("Naive Bayes results", inputs, answers)
        .Hold();

SVMs are supervised learning models with associated learning algorithms that analyze data and recognize patterns, used for classification and regression analysis.

In a linear SVM, the idea is to find a hyperplane that separates the training vectors into two classes.
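As a brief sketch in our own notation (w is a weight vector and b a bias, both learned from the data), the hyperplane and the resulting decision rule can be written as:

```latex
\{\, x : w^{\top} x + b = 0 \,\},
\qquad
f(x) = \operatorname{sign}\!\left(w^{\top} x + b\right)
```

With two inputs, as in the yin-yang problem, this hyperplane is simply a straight line in the plane, which is why a linear machine cannot follow the curved boundary between the two classes.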

    // Create a L2-regularized L2-loss optimization algorithm for
    // the dual form of the learning problem. This is *exactly* the
    // same method used by LIBLINEAR when specifying -s 1 in the
    // command line (i.e. L2R_L2LOSS_SVC_DUAL).
    //
    var teacher = new LinearCoordinateDescent();

    // Teach the vector machine
    var svm = teacher.Learn(inputs, outputs);

    // Classify the samples using the model
    bool[] answers = svm.Decide(inputs);

    // Convert to Int32 so we can plot:
    int[] zeroOneAnswers = answers.ToZeroOne();

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("LinearSVM results", inputs, zeroOneAnswers);

    // Grab the index of multipliers higher than 0
    int[] idx = teacher.Lagrange.Find(x => x > 0);

    // Select the input vectors for those
    double[][] sv = inputs.Get(idx);

    // Plot the support vectors selected by the machine
    ScatterplotBox.Show("Support vectors", sv).Hold();

Kernel methods enable SVMs to operate in a high-dimensional, implicit feature space without ever computing the coordinates of the data in that space.
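In the usual textbook formulation (the notation here is ours, and the exact parameterization of the framework's Gaussian kernel is an assumption), the decision function only ever touches the data through a kernel function k, so the feature space never has to be built explicitly:

```latex
f(x) = \operatorname{sign}\!\left(\sum_{i=1}^{n} \alpha_i \, y_i \, k(x_i, x) + b\right),
\qquad
k(x, z) = \exp\!\left(-\frac{\lVert x - z \rVert^{2}}{2\sigma^{2}}\right)
```

Only the training vectors with a multiplier greater than zero contribute to the sum. These are the support vectors, which is why the code below selects the inputs whose Lagrange multipliers are higher than 0.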

    // Create a new Sequential Minimal Optimization (SMO) learning
    // algorithm and estimate the complexity parameter C from data
    var teacher = new SequentialMinimalOptimization<Gaussian>()
    {
        UseComplexityHeuristic = true,
        UseKernelEstimation = true // estimate the kernel from the data
    };

    // Teach the vector machine
    var svm = teacher.Learn(inputs, outputs);

    // Classify the samples using the model
    bool[] answers = svm.Decide(inputs);

    // Convert to Int32 so we can plot:
    int[] zeroOneAnswers = answers.ToZeroOne();

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("GaussianSVM results", inputs, zeroOneAnswers);

    // Grab the index of multipliers higher than 0
    int[] idx = teacher.Lagrange.Find(x => x > 0);

    // Select the input vectors for those
    double[][] sv = inputs.Get(idx);

    // Plot the support vectors selected by the machine
    ScatterplotBox.Show("Support vectors", sv).Hold();

The standard SVMs are only binary classifiers, meaning they can only distinguish between two classes: 1 or 0, true or false, etc. However, they can be extended to work with multi-class or multi-label classification problems using special constructions. In the framework, those constructions are the OneVsOne and OneVsRest strategies, respectively. They can be used to learn multi-class or multi-label SVMs through the MulticlassSupportVectorLearning or MultilabelSupportVectorLearning classes.
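To make the difference between the two constructions concrete: for K classes, a one-vs-one construction trains one binary machine per pair of classes, while a one-vs-rest construction trains one binary machine per class. These counts are general properties of the two strategies, not something specific to the framework:

```latex
N_{\text{one-vs-one}} = \binom{K}{2} = \frac{K(K-1)}{2},
\qquad
N_{\text{one-vs-rest}} = K
```

For a problem with K = 4 classes, such as the one in the next example, that is 6 pairwise machines versus 4 per-class machines.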

    // The following is a simple auto-association function where each input
    // corresponds to its own class. This problem should be easily solved
    // by a Linear kernel.

    // Sample input data
    double[][] inputs =
    {
        new double[] { 0 },
        new double[] { 3 },
        new double[] { 1 },
        new double[] { 2 },
    };

    // Outputs for each of the inputs
    int[] outputs = { 0, 3, 1, 2, };

    // Create the multi-class learning algorithm for the machine
    var teacher = new MulticlassSupportVectorLearning<Linear>()
    {
        Learner = (p) => new SequentialMinimalOptimization<Linear>()
        {
            Complexity = 10000.0 // Create a hard SVM
        }
    };

    // Learn a multi-class SVM using the teacher
    var svm = teacher.Learn(inputs, outputs);

    // Compute the machine answers for the inputs
    int[] answers = svm.Decide(inputs);

This is exactly the same example as above, but rather than having only one output class associated with each input vector, we can have as many classes as we want.

    // The following is a simple auto-association function where each input
    // corresponds to its own class. This problem should be easily solved
    // by a Linear kernel.

    // Sample input data
    double[][] inputs =
    {
        new double[] { 0 },
        new double[] { 3 },
        new double[] { 1 },
        new double[] { 2 },
    };

    // Outputs for each of the inputs
    int[][] outputs =
    {
        new[] { -1,  1, -1 },
        new[] { -1, -1,  1 },
        new[] {  1,  1, -1 },
        new[] { -1, -1, -1 },
    };

    // Create the Multi-label learning algorithm for the machine
    var teacher = new MultilabelSupportVectorLearning<Linear>()
    {
        Learner = (p) => new SequentialMinimalOptimization<Linear>()
        {
            Complexity = 10000.0 // Create a hard SVM
        }
    };

    // Learn a multi-label SVM using the teacher
    var svm = teacher.Learn(inputs, outputs);

    // Compute the machine answers for the inputs
    bool[][] answers = svm.Decide(inputs);

    // Use the machine as if it were a multi-class machine
    // instead of a multi-label, identifying the strongest
    // class among the multi-label predictions:
    int[] maxAnswers = svm.ToMulticlass().Decide(inputs);

The goal of decision tree learning is to create a model that predicts the value of a target variable by learning simple decision rules inferred from the data features.

    // In our problem, we have 2 classes (samples can be either
    // positive or negative), and 2 continuous-valued inputs.
    C45Learning teacher = new C45Learning(new[]
    {
        DecisionVariable.Continuous("X"),
        DecisionVariable.Continuous("Y")
    });

    // Use the learning algorithm to induce the tree
    DecisionTree tree = teacher.Learn(inputs, outputs);

    // Classify the samples using the model
    int[] answers = tree.Decide(inputs);

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("Decision Tree results", inputs, answers)
        .Hold();

The word network in the term 'artificial neural network' refers to the interconnections between the neurons in the different layers of each system.

    // Since we would like to learn binary outputs in the form
    // [-1,+1], we can use a bipolar sigmoid activation function
    IActivationFunction function = new BipolarSigmoidFunction();

    // In our problem, we have 2 inputs (x, y pairs), and we will
    // be creating a network with 5 hidden neurons and 1 output:
    //
    var network = new ActivationNetwork(function,
        inputsCount: 2, neuronsCount: new[] { 5, 1 });

    // Create a Levenberg-Marquardt algorithm
    var teacher = new LevenbergMarquardtLearning(network)
    {
        UseRegularization = true
    };

    // Because the network is expecting multiple outputs,
    // we have to convert our single variable into arrays
    //
    var y = outputs.ToDouble().ToArray();

    // Iterate until the stop criterion is met
    double error = double.PositiveInfinity;
    double previous;

    do
    {
        previous = error;

        // Compute one learning iteration
        error = teacher.RunEpoch(inputs, y);

        // Keep going while the error still changes by more than a relative
        // tolerance (the first pass always continues, since previous is infinite)
    } while (double.IsInfinity(previous)
        || System.Math.Abs(previous - error) > 1e-10 * previous);

    // Classify the samples using the model
    int[] answers = inputs.Apply(network.Compute).GetColumn(0).Apply(System.Math.Sign);

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("Network results", inputs, answers)
        .Hold();

Logistic regression measures the relationship between the categorical dependent variable and one or more independent variables by estimating probabilities using a logistic function.
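For the binary case, this can be sketched as follows (our own notation: β₀ is an intercept and β a coefficient vector, both estimated from the data). The model passes a linear combination of the inputs through the logistic function to obtain a probability:

```latex
P(y = 1 \mid x) = \frac{1}{1 + \exp\!\left(-(\beta_0 + \beta^{\top} x)\right)}
```

A common decision rule, assumed here rather than taken from the framework's documentation, is to answer true whenever this probability is at least 0.5, which is equivalent to checking whether β₀ + βᵀx is non-negative.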

    // Create iterative re-weighted least squares for logistic regressions
    var teacher = new IterativeReweightedLeastSquares<LogisticRegression>()
    {
        MaxIterations = 100,
        Regularization = 1e-6
    };

    // Use the teacher algorithm to learn the regression:
    LogisticRegression lr = teacher.Learn(inputs, outputs);

    // Classify the samples using the model
    bool[] answers = lr.Decide(inputs);

    // Convert to Int32 so we can plot:
    int[] zeroOneAnswers = answers.ToZeroOne();

    // Plot the results
    ScatterplotBox.Show("Expected results", inputs, outputs);
    ScatterplotBox.Show("Logistic Regression results", inputs, zeroOneAnswers)
        .Hold();

In some problems, samples can belong to more than one class at a time. Those problems are called multi-label classification problems and can be solved in different ways. One way to attack a multi-label problem is by using a 1-vs-all support vector machine.

    // The following is a simple auto-association function where each input
    // corresponds to its own class. This problem should be easily solved
    // by a Linear kernel.

    // Sample input data
    double[][] inputs =
    {
        new double[] { 0 },
        new double[] { 3 },
        new double[] { 1 },
        new double[] { 2 },
    };

    // Outputs for each of the inputs
    int[][] outputs =
    {
        new[] { -1,  1, -1 },
        new[] { -1, -1,  1 },
        new[] {  1,  1, -1 },
        new[] { -1, -1, -1 },
    };

    // Create the Multi-label learning algorithm for the machine
    var teacher = new MultilabelSupportVectorLearning<Linear>()
    {
        Learner = (p) => new SequentialMinimalOptimization<Linear>()
        {
            Complexity = 10000.0 // Create a hard SVM
        }
    };

    // Learn a multi-label SVM using the teacher
    var svm = teacher.Learn(inputs, outputs);

    // Compute the machine answers for the inputs
    bool[][] answers = svm.Decide(inputs);

    // Use the machine as if it were a multi-class machine
    // instead of a multi-label, identifying the strongest
    // class among the multi-label predictions:
    int[] maxAnswers = svm.ToMulticlass().Decide(inputs);

See Multi-label SVM.

A sequence classification problem is a classification problem where input vectors can have varying length. Those problems can be attacked in multiple ways. One of them is to use a classifier that has been specifically designed to work with sequences. The other is to extract a fixed number of features from those varying-length vectors, and then use them with any standard classification algorithm, such as support vector machines.

For an example on how to transform sequences into fixed-length vectors, see Dynamic Time Warp Support Vector Machine.
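To illustrate the fixed-length feature idea in the simplest possible way, here is a minimal sketch that does not use the framework at all. The `SequenceFeatures.Histogram` helper is hypothetical (it is not part of Accord.NET) and assumes every symbol is an integer in the range 0 to symbols - 1. It maps a sequence of any length to a vector with one relative frequency per symbol:

```csharp
using System;
using System.Linq;

public static class SequenceFeatures
{
    // Hypothetical helper, not an Accord.NET API: converts a variable-length
    // sequence of symbols in [0, symbols) into a fixed-length vector holding
    // the relative frequency of each symbol.
    public static double[] Histogram(int[] sequence, int symbols)
    {
        var features = new double[symbols];

        // Count how many times each symbol occurs
        foreach (int s in sequence)
            features[s] += 1.0;

        // Normalize by the sequence length (an empty sequence stays all zeros)
        if (sequence.Length > 0)
        {
            for (int i = 0; i < symbols; i++)
                features[i] /= sequence.Length;
        }

        return features;
    }

    public static void Main()
    {
        // Two sequences of different lengths
        int[][] sequences =
        {
            new[] { 0, 1, 1, 0 },
            new[] { 1, 0, 0, 0, 1 },
        };

        // Every sequence becomes a vector of length 2
        double[][] features = sequences
            .Select(s => Histogram(s, symbols: 2))
            .ToArray();

        foreach (double[] f in features)
            Console.WriteLine(string.Join(", ", f));
    }
}
```

The resulting vectors all have the same length, so they could be handed to any of the standard classifiers shown earlier. Note that a histogram discards the order of the symbols, so it is only suitable when the classes differ in how often symbols occur rather than in where they occur.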

    // Declare some training data
    int[][] inputs = new int[][]
    {
        new int[] { 0, 1, 1, 0 },    // Class 0
        new int[] { 0, 0, 1, 0 },    // Class 0
        new int[] { 0, 1, 1, 1, 0 }, // Class 0
        new int[] { 0, 1, 0 },       // Class 0

        new int[] { 1, 0, 0, 1 },    // Class 1
        new int[] { 1, 1, 0, 1 },    // Class 1
        new int[] { 1, 0, 0, 0, 1 }, // Class 1
        new int[] { 1, 0, 1 },       // Class 1
    };

    int[] outputs = new int[]
    {
        0, 0, 0, 0, // First four sequences are of class 0
        1, 1, 1, 1, // Last four sequences are of class 1
    };

    // We are trying to predict two different classes
    int classes = 2;

    // Each sequence may have up to two symbols (0 or 1)
    int symbols = 2;

    // Nested models will have two states each
    int[] states = new int[] { 2, 2 };

    // Creates a new Hidden Markov Model Classifier with the given parameters
    HiddenMarkovClassifier classifier = new HiddenMarkovClassifier(classes, states, symbols);

    // Create a new learning algorithm to train the sequence classifier
    var teacher = new HiddenMarkovClassifierLearning(classifier,

        // Train each model until the log-likelihood changes less than 0.001
        modelIndex => new BaumWelchLearning(classifier.Models[modelIndex])
        {
            Tolerance = 0.001,
            MaxIterations = 0
        }
    );

    // Train the sequence classifier using the algorithm
    teacher.Learn(inputs, outputs);

    // Compute the classifier answers for the given inputs
    int[] answers = classifier.Decide(inputs);

For examples of sequence classifiers, see Hidden Markov Classifier Learning and Hidden Conditional Random Field Learning.

    // Suppose we would like to learn how to classify the
    // following set of sequences among three class labels:
    int[][] inputs =
    {
        // First class of sequences: starts and
        // ends with zeros, ones in the middle:
        new[] { 0, 1, 1, 1, 0 },
        new[] { 0, 0, 1, 1, 0, 0 },
        new[] { 0, 1, 1, 1, 1, 0 },

        // Second class of sequences: starts with
        // twos and switches to ones until the end.
        new[] { 2, 2, 2, 2, 1, 1, 1, 1, 1 },
        new[] { 2, 2, 1, 2, 1, 1, 1, 1, 1 },
        new[] { 2, 2, 2, 2, 2, 1, 1, 1, 1 },

        // Third class of sequences: can start
        // with any symbols, but ends with three.
        new[] { 0, 0, 1, 1, 3, 3, 3, 3 },
        new[] { 0, 0, 0, 3, 3, 3, 3 },
        new[] { 1, 0, 1, 2, 2, 2, 3, 3 },
        new[] { 1, 1, 2, 3, 3, 3, 3 },
        new[] { 0, 0, 1, 1, 3, 3, 3, 3 },
        new[] { 2, 2, 0, 3, 3, 3, 3 },
        new[] { 1, 0, 1, 2, 3, 3, 3, 3 },
        new[] { 1, 1, 2, 3, 3, 3, 3 },
    };

    // Now consider their respective class labels
    int[] outputs =
    {
        /* Sequences  1-3 are from class 0: */ 0, 0, 0,
        /* Sequences  4-6 are from class 1: */ 1, 1, 1,
        /* Sequences 7-14 are from class 2: */ 2, 2, 2, 2, 2, 2, 2, 2
    };

    // Create the Hidden Conditional Random Field using a set of discrete features
    var function = new MarkovDiscreteFunction(states: 3, symbols: 4, outputClasses: 3);
    var classifier = new HiddenConditionalRandomField<int>(function);

    // Create a learning algorithm
    var teacher = new HiddenResilientGradientLearning<int>(classifier)
    {
        MaxIterations = 50
    };

    // Run the algorithm and learn the models
    teacher.Learn(inputs, outputs);

    // Compute the classifier answers for the given inputs
    int[] answers = classifier.Decide(inputs);
