Preface

Working with both Object-Oriented software and Relational Databases can be cumbersome and time-consuming.Development costs are significantly higher due to a paradigm mismatch between how data is represented in objects versus relational databases.Hibernate is an Object/Relational Mapping solution for Java environments.The termObject/Relational Mapping refers to the technique of mapping data from an object model representation to a relational data model representation (and vice versa).

Hibernate not only takes care of the mapping from Java classes to database tables (and from Java data types to SQL data types), but also provides data query and retrieval facilities.It can significantly reduce development time otherwise spent with manual data handling in SQL and JDBC.Hibernate’s design goal is to relieve the developer from 95% of common data persistence-related programming tasks by eliminating the need for manual, hand-crafted data processing using SQL and JDBC.However, unlike many other persistence solutions, Hibernate does not hide the power of SQL from you and guarantees that your investment in relational technology and knowledge is as valid as always.

Hibernate may not be the best solution for data-centric applications that only use stored-procedures to implement the business logic in the database, it is most useful with object-oriented domain models and business logic in the Java-based middle-tier.However, Hibernate can certainly help you to remove or encapsulate vendor-specific SQL code and will help with the common task of result set translation from a tabular representation to a graph of objects.

Getting Started

While a strong background in SQL is not required to use Hibernate, a basic understanding of its concepts is useful - especially the principles ofdata modeling.Understanding the basics of transactions and design patterns such asUnit of Work are important as well.

New users may want to first look at the tutorial-styleQuick Start guide. This User Guide is really more of a reference guide.For a more high-level discussion of the most used features of Hibernate, see theIntroduction to Hibernate guide. There is also a series oftopical guides providing deep dives into various topics such as logging, compatibility and support, etc. |

Get Involved

Use Hibernate and report any bugs or issues you find. SeeIssue Tracker for details.

Try your hand at fixing some bugs or implementing enhancements. Again, seeIssue Tracker.

Engage with the community using the methods listed in theCommunity section.

Help improve this documentation. Contact us on the developer mailing list or Zulip if you have interest.

Spread the word. Let the rest of your organization know about the benefits of Hibernate.

1. Compatibility

1.1. Dependencies

Hibernate 7.0.10.Final requires the following dependencies (among others):

Version | |

|---|---|

Java Runtime | 17 or 21 |

3.2 | |

JDBC (bundled with the Java Runtime) | 4.2 |

Find more information for all versions of Hibernate on ourcompatibility matrix. Thecompatibility policy may also be of interest. |

If you get Hibernate from Maven Central, it is recommended to import Hibernate Platformas part of your dependency management to keep all its artifact versions aligned.

- Gradle

dependencies{implementationplatform"org.hibernate.orm:hibernate-platform:7.0.10.Final"// use the versions from the platformimplementation"org.hibernate.orm:hibernate-core"implementation"jakarta.transaction:jakarta.transaction-api"}- Maven

<dependencyManagement> <dependencies> <dependency> <groupId>org.hibernate.orm</groupId> <artifactId>hibernate-platform</artifactId> <version>7.0.10.Final</version> <type>pom</type> <scope>import</scope> </dependency> </dependencies></dependencyManagement><!-- use the versions from the platform --><dependencies> <dependency> <groupId>org.hibernate.orm</groupId> <artifactId>hibernate-core</artifactId> </dependency> <dependency> <groupId>jakarta.transaction</groupId> <artifactId>jakarta.transaction-api</artifactId> </dependency></dependencies>1.2. Database

Hibernate 7.0.10.Final is compatible with the following database versions,provided you use the correspondingdialects:

| Dialect | Minimum Database Version |

|---|---|

AzureSQLServerDialect | 11.0 |

CockroachDialect | 23.1 |

DB2Dialect | 10.5 |

DB2iDialect | 7.1 |

DB2zDialect | 12.1 |

GenericDialect | 0.0 |

H2Dialect | 2.1.214 |

HANADialect | 2.0.50 |

HSQLDialect | 2.6.1 |

MariaDBDialect | 10.5 |

MySQLDialect | 8.0 |

OracleDialect | 19.0 |

PostgreSQLDialect | 13.0 |

PostgresPlusDialect | 13.0 |

SQLServerDialect | 11.0 |

SpannerDialect | 0.0 |

SybaseASEDialect | 16.0 |

SybaseDialect | 16.0 |

2. Architecture

2.1. Overview

Hibernate, as an ORM solution, effectively "sits between" the Java application data access layer and the Relational Database, as can be seen in the diagram above.The Java application makes use of the Hibernate APIs to load, store, query, etc. its domain data.Here we will introduce the essential Hibernate APIs.This will be a brief introduction; we will discuss these contracts in detail later.

As a Jakarta Persistence provider, Hibernate implements the Java Persistence API specifications and the association between Jakarta Persistence interfaces and Hibernate specific implementations can be visualized in the following diagram:

- SessionFactory (

org.hibernate.SessionFactory) A thread-safe (and immutable) representation of the mapping of the application domain model to a database.Acts as a factory for

org.hibernate.Sessioninstances. TheEntityManagerFactoryis the Jakarta Persistence equivalent of aSessionFactoryand basically, those two converge into the sameSessionFactoryimplementation.A

SessionFactoryis very expensive to create, so, for any given database, the application should have only one associatedSessionFactory.TheSessionFactorymaintains services that Hibernate uses across allSession(s)such as second level caches, connection pools, transaction system integrations, etc.- Session (

org.hibernate.Session) A single-threaded, short-lived object conceptually modeling a "Unit of Work" (PoEAA).In Jakarta Persistence nomenclature, the

Sessionis represented by anEntityManager.Behind the scenes, the Hibernate

Sessionwraps a JDBCjava.sql.Connectionand acts as a factory fororg.hibernate.Transactioninstances.It maintains a generally "repeatable read" persistence context (first level cache) of the application domain model.- Transaction (

org.hibernate.Transaction) A single-threaded, short-lived object used by the application to demarcate individual physical transaction boundaries.

EntityTransactionis the Jakarta Persistence equivalent and both act as an abstraction API to isolate the application from the underlying transaction system in use (JDBC or JTA).

3. Domain Model

The termdomain model comes from the realm of data modeling.It is the model that ultimately describes theproblem domain you are working in.Sometimes you will also hear the termpersistent classes.

Ultimately the application domain model is the central character in an ORM.They make up the classes you wish to map. Hibernate works best if these classes follow the Plain Old Java Object (POJO) / JavaBean programming model.However, none of these rules are hard requirements.Indeed, Hibernate assumes very little about the nature of your persistent objects. You can express a domain model in other ways (using trees ofjava.util.Map instances, for example).

Historically applications using Hibernate would have used its proprietary XML mapping file format for this purpose.With the coming of Jakarta Persistence, most of this information is now defined in a way that is portable across ORM/Jakarta Persistence providers using annotations (and/or standardized XML format).This chapter will focus on Jakarta Persistence mapping where possible.For Hibernate mapping features not supported by Jakarta Persistence we will prefer Hibernate extension annotations.

This chapter mostly uses "implicit naming" for table names, column names, etc. For details onadjusting these names seeNaming strategies. |

3.1. Mapping types

Hibernate understands both the Java and JDBC representations of application data.The ability to read/write this data from/to the database is the function of a Hibernatetype.A type, in this usage, is an implementation of theorg.hibernate.type.Type interface.This Hibernate type also describes various behavioral aspects of the Java type such as how to check for equality, how to clone values, etc.

Usage of the wordtype The Hibernate type is neither a Java type nor a SQL data type.It provides information about mapping a Java type to an SQL type as well as how to persist and fetch a given Java type to and from a relational database. When you encounter the term type in discussions of Hibernate, it may refer to the Java type, the JDBC type, or the Hibernate type, depending on the context. |

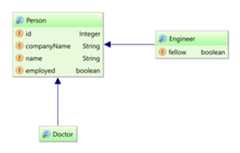

To help understand the type categorizations, let’s look at a simple table and domain model that we wish to map.

create table Contact ( id integer not null, first varchar(255), last varchar(255), middle varchar(255), notes varchar(255), starred boolean not null, website varchar(255), primary key (id))@Entity(name="Contact")publicstaticclassContact{@IdprivateIntegerid;privateNamename;privateStringnotes;privateURLwebsite;privatebooleanstarred;//Getters and setters are omitted for brevity}@EmbeddablepublicclassName{privateStringfirstName;privateStringmiddleName;privateStringlastName;// getters and setters omitted}In the broadest sense, Hibernate categorizes types into two groups:

3.1.1. Value types

A value type is a piece of data that does not define its own lifecycle.It is, in effect, owned by an entity, which defines its lifecycle.

Looked at another way, all the state of an entity is made up entirely of value types.These state fields or JavaBean properties are termedpersistent attributes.The persistent attributes of theContact class are value types.

Value types are further classified into three sub-categories:

- Basic types

in mapping the

Contacttable, all attributes except for name would be basic types. Basic types are discussed in detail inBasic types- Embeddable types

the

nameattribute is an example of an embeddable type, which is discussed in details inEmbeddable types- Collection types

although not featured in the aforementioned example, collection types are also a distinct category among value types. Collection types are further discussed inCollections

3.1.2. Entity types

Entities, by nature of their unique identifier, exist independently of other objects whereas values do not.Entities are domain model classes which correlate to rows in a database table, using a unique identifier.Because of the requirement for a unique identifier, entities exist independently and define their own lifecycle.TheContact class itself would be an example of an entity.

Mapping entities is discussed in detail inEntity types.

3.2. Basic values

A basic type is a mapping between a Java type and a single database column.

Hibernate can map many standard Java types (Integer,String, etc.) as basictypes. The mapping for many come from tables B-3 and B-4 in the JDBC specification[jdbc].Others (URL asVARCHAR, e.g.) simply make sense.

Additionally, Hibernate provides multiple, flexible ways to indicate how the Java typeshould be mapped to the database.

The Jakarta Persistence specification strictly limits the Java types that can be marked as basic to the following:

If provider portability is a concern, you should stick to just these basic types. Java Persistence 2.1 introduced the |

3.2.1. @Basic

Strictly speaking, a basic type is denoted by thejakarta.persistence.Basic annotation.

Generally, the@Basic annotation can be ignored as it is assumed by default. Both of the followingexamples are ultimately the same.

@Basic explicit@Entity(name="Product")publicclassProduct{@Id@BasicprivateIntegerid;@BasicprivateStringsku;@BasicprivateStringname;@BasicprivateStringdescription;}@Basic implied@Entity(name="Product")publicclassProduct{@IdprivateIntegerid;privateStringsku;privateStringname;privateStringdescription;}The@Basic annotation defines 2 attributes.

optional- boolean (defaults to true)Defines whether this attribute allows nulls. Jakarta Persistence definesthis as "a hint", which means the provider is free to ignore it. Jakarta Persistence also says that it will beignored if the type is primitive. As long as the type is not primitive, Hibernate will honor thisvalue. Works in conjunction with

@Column#nullable- see@Column.fetch- FetchType (defaults to EAGER)Defines whether this attribute should be fetched eagerly or lazily.

EAGERindicates that the value will be fetched as part of loading the owner.LAZYvalues arefetched only when the value is accessed. Jakarta Persistence requires providers to supportEAGER, while support forLAZYis optional meaning that a provider is free to not support it. Hibernate supports lazy loadingof basic values as long as you are using itsbytecode enhancementsupport.

3.2.2. @Column

Jakarta Persistence defines rules for implicitly determining the name of tables and columns.For a detailed discussion of implicit naming seeNaming strategies.

For basic type attributes, the implicit naming rule is that the column name is the same as the attribute name.If that implicit naming rule does not meet your requirements, you can explicitly tell Hibernate (and other providers) the column name to use.

@Entity(name="Product")publicclassProduct{@IdprivateIntegerid;privateStringsku;privateStringname;@Column(name="NOTES")privateStringdescription;}Here we use@Column to explicitly map thedescription attribute to theNOTES column, as opposed to theimplicit column namedescription. SeeNaming strategies for additional details.

The@Column annotation defines other mapping information as well. See its Javadocs for details.

3.2.3. @Formula

@Formula allows mapping any database computed value as a virtual read-only column.

|

@Formula mapping usage@Entity(name="Account")publicstaticclassAccount{@IdprivateLongid;privateDoublecredit;privateDoublerate;@Formula(value="credit * rate")privateDoubleinterest;//Getters and setters omitted for brevity}When loading theAccount entity, Hibernate is going to calculate theinterest property using the configured@Formula:

@Formula mappingdoInJPA(this::entityManagerFactory,entityManager->{Accountaccount=newAccount();account.setId(1L);account.setCredit(5000d);account.setRate(1.25/100);entityManager.persist(account);});doInJPA(this::entityManagerFactory,entityManager->{Accountaccount=entityManager.find(Account.class,1L);assertEquals(Double.valueOf(62.5d),account.getInterest());});INSERT INTO Account (credit, rate, id)VALUES (5000.0, 0.0125, 1)SELECT a.id as id1_0_0_, a.credit as credit2_0_0_, a.rate as rate3_0_0_, a.credit * a.rate as formula0_0_FROM Account aWHERE a.id = 1The SQL fragment defined by the |

3.2.4. Mapping basic values

To deal with values of basic type, Hibernate needs to understand a few things about the mapping:

The capabilities of the Java type. For example:

How to compare values

How to calculate a hash-code

How to coerce values of this type to another type

The JDBC type it should use

How to bind values to JDBC statements

How to extract from JDBC results

Any conversion it should perform on the value to/from the database

The mutability of the value - whether the internal state can change like

java.util.Dateor is immutable likejava.lang.String

This section covers how Hibernate determines these pieces and how to influence that determination process.

The following sections focus on approaches introduced in version 6 to influence how Hibernate willmap basic value to the database. This includes removal of the following deprecated legacy annotations:

See the 6.0 migration guide for discussions about migrating uses of these annotations The new annotations added as part of 6.0 support composing mappings in annotationsthrough "meta-annotations". |

Looking atthis example, how does Hibernate know what mappingto use for these attributes? The annotations do not really provide much information.

This is an illustration of Hibernate’s implicit basic-type resolution, which is a series of checks to determinethe appropriate mapping to use. Describing the complete process for implicit resolution is beyond the scopeof this documentation[2].

This is primarily driven by the Java type defined for the basic type, which can generallybe determined through reflection. Is the Java type an enum? Is it temporal? These answerscan indicate certain mappings be used.

The fallback is to map the value to the "recommended" JDBC type.

Worst case, if the Java type isSerializable Hibernate will try to handle it via binary serialization.

For cases where the Java type is not a standard type or if some specialized handling is desired, Hibernateprovides 2 main approaches to influence this mapping resolution:

A compositional approach using a combination of one-or-more annotations to describe specificaspects of the mapping. This approach is covered inCompositional basic mapping.

The

UserTypecontract, which is covered inCustom type mapping

These 2 approaches should be considered mutually exclusive. A custom UserType will alwaystake precedence over compositional annotations.

The next few sections look at common, standard Java types and discusses various ways to map them.SeeCase Study : BitSet for examples of mappingBitSet as a basic type using all of these approaches.

3.2.5. Enums

Hibernate supports the mapping of Java enums as basic value types in a number of different ways.

@Enumerated

The original Jakarta Persistence-compliant way to map enums was via the@Enumerated or@MapKeyEnumeratedannotations, working on the principle that the enum values are stored according to one of 2 strategies indicatedbyjakarta.persistence.EnumType:

ORDINALstored according to the enum value’s ordinal position within the enum class, as indicated by

java.lang.Enum#ordinalSTRINGstored according to the enum value’s name, as indicated by

java.lang.Enum#name

Assuming the following enumeration:

PhoneType enumerationpublicenumPhoneType{LAND_LINE,MOBILE;}In the ORDINAL example, thephone_type column is defined as a (nullable) INTEGER type and would hold:

NULLFor null values

0For the

LAND_LINEenum1For the

MOBILEenum

@Enumerated(ORDINAL) example@Entity(name="Phone")publicstaticclassPhone{@IdprivateLongid;@Column(name="phone_number")privateStringnumber;@Enumerated(EnumType.ORDINAL)@Column(name="phone_type")privatePhoneTypetype;//Getters and setters are omitted for brevity}When persisting this entity, Hibernate generates the following SQL statement:

@Enumerated(ORDINAL) mappingPhonephone=newPhone();phone.setId(1L);phone.setNumber("123-456-78990");phone.setType(PhoneType.MOBILE);entityManager.persist(phone);INSERT INTO Phone (phone_number, phone_type, id)VALUES ('123-456-78990', 1, 1)In the STRING example, thephone_type column is defined as a (nullable) VARCHAR type and would hold:

NULLFor null values

LAND_LINEFor the

LAND_LINEenumMOBILEFor the

MOBILEenum

@Enumerated(STRING) example@Entity(name="Phone")publicstaticclassPhone{@IdprivateLongid;@Column(name="phone_number")privateStringnumber;@Enumerated(EnumType.STRING)@Column(name="phone_type")privatePhoneTypetype;//Getters and setters are omitted for brevity}Persisting the same entity as in the@Enumerated(ORDINAL) example, Hibernate generates the following SQL statement:

@Enumerated(STRING) mappingINSERT INTO Phone (phone_number, phone_type, id)VALUES ('123-456-78990', 'MOBILE', 1)Using AttributeConverter

Let’s consider the followingGender enum which stores its values using the'M' and'F' codes.

publicenumGender{MALE('M'),FEMALE('F');privatefinalcharcode;Gender(charcode){this.code=code;}publicstaticGenderfromCode(charcode){if(code=='M'||code=='m'){returnMALE;}if(code=='F'||code=='f'){returnFEMALE;}thrownewUnsupportedOperationException("The code "+code+" is not supported!");}publicchargetCode(){returncode;}}You can map enums in a Jakarta Persistence compliant way using a Jakarta Persistence AttributeConverter.

AttributeConverter example@Entity(name="Person")publicstaticclassPerson{@IdprivateLongid;privateStringname;@Convert(converter=GenderConverter.class)publicGendergender;//Getters and setters are omitted for brevity}@ConverterpublicstaticclassGenderConverterimplementsAttributeConverter<Gender,Character>{publicCharacterconvertToDatabaseColumn(Gendervalue){if(value==null){returnnull;}returnvalue.getCode();}publicGenderconvertToEntityAttribute(Charactervalue){if(value==null){returnnull;}returnGender.fromCode(value);}}Here, the gender column is defined as a CHAR type and would hold:

NULLFor null values

'M'For the

MALEenum'F'For the

FEMALEenum

For additional details on using AttributeConverters, seeAttributeConverters section.

Jakarta Persistence explicitly disallows the use of an So, when using the |

Custom type

You can also map enums using a Hibernate custom type mapping.Let’s again revisit the Gender enum example, this time using a custom Type to store the more standardized'M' and'F' codes.

@Entity(name="Person")publicstaticclassPerson{@IdprivateLongid;privateStringname;@Type(GenderType.class)@Column(length=6)publicGendergender;//Getters and setters are omitted for brevity}publicclassGenderTypeextendsUserTypeSupport<Gender>{publicGenderType(){super(Gender.class,Types.CHAR);}}publicclassGenderJavaTypeextendsAbstractClassJavaType<Gender>{publicstaticfinalGenderJavaTypeINSTANCE=newGenderJavaType();protectedGenderJavaType(){super(Gender.class);}publicStringtoString(Gendervalue){returnvalue==null?null:value.name();}publicGenderfromString(CharSequencestring){returnstring==null?null:Gender.valueOf(string.toString());}public<X>Xunwrap(Gendervalue,Class<X>type,WrapperOptionsoptions){returnCharacterJavaType.INSTANCE.unwrap(value==null?null:value.getCode(),type,options);}public<X>Genderwrap(Xvalue,WrapperOptionsoptions){returnGender.fromCode(CharacterJavaType.INSTANCE.wrap(value,options));}}Again, the gender column is defined as a CHAR type and would hold:

NULLFor null values

'M'For the

MALEenum'F'For the

FEMALEenum

For additional details on using custom types, seeCustom type mapping section.

3.2.6. Boolean

By default,Boolean attributes map toBOOLEAN columns, at least when the database has adedicatedBOOLEAN type. On databases which don’t, Hibernate uses whatever else is available:BIT,TINYINT, orSMALLINT.

// this will be mapped to BIT or BOOLEAN on the database@Basicbooleanimplicit;However, it is quite common to find boolean values encoded as a character or as an integer.Such cases are exactly the intention ofAttributeConverter. For convenience, Hibernateprovides 3 built-in converters for the common boolean mapping cases:

YesNoConverterencodes a boolean value as'Y'or'N',TrueFalseConverterencodes a boolean value as'T'or'F', andNumericBooleanConverterencodes the value as an integer,1for true, and0for false.

AttributeConverter// this will get mapped to CHAR or NCHAR with a conversion@Basic@Convert(converter=org.hibernate.type.YesNoConverter.class)booleanconvertedYesNo;// this will get mapped to CHAR or NCHAR with a conversion@Basic@Convert(converter=org.hibernate.type.TrueFalseConverter.class)booleanconvertedTrueFalse;// this will get mapped to TINYINT with a conversion@Basic@Convert(converter=org.hibernate.type.NumericBooleanConverter.class)booleanconvertedNumeric;If the boolean value is defined in the database as something other thanBOOLEAN, character or integer,the value can also be mapped using a customAttributeConverter - seeAttributeConverters.

AUserType may also be used - seeCustom type mapping

3.2.7. Byte

By default, Hibernate maps values ofByte /byte to theTINYINT JDBC type.

// these will both be mapped using TINYINTBytewrapper;byteprimitive;SeeByte array for mapping arrays of bytes.

3.2.8. Short

By default, Hibernate maps values ofShort /short to theSMALLINT JDBC type.

// these will both be mapped using SMALLINTShortwrapper;shortprimitive;3.2.9. Integer

By default, Hibernate maps values ofInteger /int to theINTEGER JDBC type.

// these will both be mapped using INTEGERIntegerwrapper;intprimitive;3.2.10. Long

By default, Hibernate maps values ofLong /long to theBIGINT JDBC type.

// these will both be mapped using BIGINTLongwrapper;longprimitive;3.2.11. BigInteger

By default, Hibernate maps values ofBigInteger to theNUMERIC JDBC type.

// will be mapped using NUMERICBigIntegerwrapper;3.2.12. Double

By default, Hibernate maps values ofDouble to theDOUBLE,FLOAT,REAL orNUMERIC JDBC type depending on the capabilities of the database

// these will be mapped using DOUBLE, FLOAT, REAL or NUMERIC// depending on the capabilities of the databaseDoublewrapper;doubleprimitive;A specific type can be influenced using any of the JDBC type influencers covered inJdbcType section.

If@JdbcTypeCode is used, the Dialect is still consulted to make sure the databasesupports the requested type. If not, an appropriate type is selected

3.2.13. Float

By default, Hibernate maps values ofFloat to theFLOAT,REAL orNUMERIC JDBC type depending on the capabilities of the database.

// these will be mapped using FLOAT, REAL or NUMERIC// depending on the capabilities of the databaseFloatwrapper;floatprimitive;A specific type can be influenced using any of the JDBC type influencers covered inMapping basic values section.

If@JdbcTypeCode is used, the Dialect is still consulted to make sure the databasesupports the requested type. If not, an appropriate type is selected

3.2.14. BigDecimal

By default, Hibernate maps values ofBigDecimal to theNUMERIC JDBC type.

// will be mapped using NUMERICBigDecimalwrapper;3.2.15. Character

By default, Hibernate mapsCharacter to theCHAR JDBC type.

// these will be mapped using CHARCharacterwrapper;charprimitive;3.2.16. String

By default, Hibernate mapsString to theVARCHAR JDBC type.

// will be mapped using VARCHARStringstring;// will be mapped using CLOB@LobStringclobString;Optionally, you may specify the maximum length of the string using@Column(length=…),or using the@Size annotation from Hibernate Validator.For very large strings, you can use one of the constant values defined by the classorg.hibernate.Length, for example:

@Column(length=Length.LONG)privateStringtext;Alternatively, you may explicitly specify the JDBC typeLONGVARCHAR, which is treatedas aVARCHAR mapping with defaultlength=Length.LONG when nolength is explicitlyspecified:

@JdbcTypeCode(Types.LONGVARCHAR)privateStringtext;If you use Hibernate for schema generation, Hibernate will generate DDL with a column typethat is large enough to accommodate the maximum length you’ve specified.

If the maximum length you specify is too long to fit in the largest |

SeeHandling LOB data for details on mapping to a database CLOB.

For databases which support nationalized character sets, you can also store strings asnationalized data.

// will be mapped using NVARCHAR@NationalizedStringnstring;// will be mapped using NCLOB@Lob@NationalizedStringnclobString;SeeHandling nationalized character data for details on mapping strings using nationalized character sets.

3.2.17. Character arrays

By default, Hibernate mapschar[] to theVARCHAR JDBC type.SinceCharacter[] can contain null elements, it is mapped asbasic array type instead.Prior to Hibernate 6.2, alsoCharacter[] mapped toVARCHAR, yet disallowednull elements.To continue mappingCharacter[] to theVARCHAR JDBC type, or for LOBs mapping to theCLOB JDBC type,it is necessary to annotate the persistent attribute with@JavaType( CharacterArrayJavaType.class ).

// mapped as VARCHARchar[]primitive;Character[]wrapper;@JavaType(CharacterArrayJavaType.class)Character[]wrapperOld;// mapped as CLOB@Lobchar[]primitiveClob;@LobCharacter[]wrapperClob;SeeHandling LOB data for details on mapping as database LOB.

For databases which support nationalized character sets, you can also store character arrays asnationalized data.

// mapped as NVARCHAR@Nationalizedchar[]primitiveNVarchar;@NationalizedCharacter[]wrapperNVarchar;@Nationalized@JavaType(CharacterArrayJavaType.class)Character[]wrapperNVarcharOld;// mapped as NCLOB@Lob@Nationalizedchar[]primitiveNClob;@Lob@NationalizedCharacter[]wrapperNClob;SeeHandling nationalized character data for details on mapping strings using nationalized character sets.

3.2.18. Clob / NClob

Be sure to check outHandling LOB data which covers basics of LOB handling andHandling nationalized character data which covers basicsof nationalized data handling. |

By default, Hibernate will map thejava.sql.Clob Java type toCLOB andjava.sql.NClob toNCLOB.

Considering we have the following database table:

CREATETABLEProduct(idINTEGERNOTNULL,nameVARCHAR(255),warrantyCLOB,PRIMARYKEY(id))Let’s first map this using the@Lob Jakarta Persistence annotation and thejava.sql.Clob type:

CLOB mapped tojava.sql.Clob@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@LobprivateClobwarranty;//Getters and setters are omitted for brevity}To persist such an entity, you have to create aClob using theClobProxy Hibernate utility:

java.sql.Clob entityStringwarranty="My product warranty";finalProductproduct=newProduct();product.setId(1);product.setName("Mobile phone");product.setWarranty(ClobProxy.generateProxy(warranty));entityManager.persist(product);To retrieve theClob content, you need to transform the underlyingjava.io.Reader:

java.sql.Clob entityProductproduct=entityManager.find(Product.class,productId);try(Readerreader=product.getWarranty().getCharacterStream()){assertEquals("My product warranty",toString(reader));}We could also map the CLOB in a materialized form. This way, we can either use aString or achar[].

CLOB mapped toString@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@LobprivateStringwarranty;//Getters and setters are omitted for brevity}We might even want the materialized data as a char array.

char[] mapping@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@Lobprivatechar[]warranty;//Getters and setters are omitted for brevity}Just like withCLOB, Hibernate can also deal withNCLOB SQL data types:

NCLOB - SQLCREATETABLEProduct(idINTEGERNOTNULL,nameVARCHAR(255),warrantynclob,PRIMARYKEY(id))Hibernate can map theNCLOB to ajava.sql.NClob

NCLOB mapped tojava.sql.NClob@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@Lob@Nationalized// Clob also works, because NClob extends Clob.// The database type is still NCLOB either way and handled as such.privateNClobwarranty;//Getters and setters are omitted for brevity}To persist such an entity, you have to create anNClob using theNClobProxy Hibernate utility:

java.sql.NClob entityStringwarranty="My product®™ warranty 😍";finalProductproduct=newProduct();product.setId(1);product.setName("Mobile phone");product.setWarranty(NClobProxy.generateProxy(warranty));entityManager.persist(product);To retrieve theNClob content, you need to transform the underlyingjava.io.Reader:

java.sql.NClob entityProductproduct=entityManager.find(Product.class,1);NClobwarranty=product.getWarranty();assertEquals("My product®™ warranty 😍",warranty.getSubString(1,(int)warranty.length()));We could also map theNCLOB in a materialized form. This way, we can either use aString or achar[].

NCLOB mapped toString@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@Lob@NationalizedprivateStringwarranty;//Getters and setters are omitted for brevity}We might even want the materialized data as a char array.

char[] mapping@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@Lob@Nationalizedprivatechar[]warranty;//Getters and setters are omitted for brevity}3.2.19. Byte array

By default, Hibernate mapsbyte[] to theVARBINARY JDBC type.SinceByte[] can contain null elements, it is mapped asbasic array type instead.Prior to Hibernate 6.2, alsoByte[] mapped toVARBINARY, yet disallowednull elements.To continue mappingByte[] to theVARBINARY JDBC type, or for LOBs mapping to theBLOB JDBC type,it is necessary to annotate the persistent attribute with@JavaType( ByteArrayJavaType.class ).

// mapped as VARBINARYprivatebyte[]primitive;privateByte[]boxed;@JavaType(ByteArrayJavaType.class)privateByte[]wrapperOld;// mapped as (materialized) BLOB@Lobprivatebyte[]primitiveLob;@LobprivateByte[]wrapperLob;Just like with strings, you may specify the maximum length using@Column(length=…)or the@Size annotation from Hibernate Validator.For very large arrays, you can use the constants defined byorg.hibernate.Length.Alternatively@JdbcTypeCode(Types.LONGVARBINARY) is treated as aVARBINARY mappingwith defaultlength=Length.LONG when no length is explicitly specified.

If you use Hibernate for schema generation, Hibernate will generate DDL with a column typethat is large enough to accommodate the maximum length you’ve specified.

If the maximum length you specify is too long to fit in the largest |

SeeHandling LOB data for details on mapping to a database BLOB.

3.2.20. Blob

Be sure to check outHandling LOB data which covers basics of LOB handling. |

By default, Hibernate will map thejava.sql.Blob Java type toBLOB.

Considering we have the following database table:

CREATETABLEProduct(idINTEGERNOTNULL,imageblob,nameVARCHAR(255),PRIMARYKEY(id))Let’s first map this using the JDBCjava.sql.Blob type.

BLOB mapped tojava.sql.Blob@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@LobprivateBlobimage;//Getters and setters are omitted for brevity}To persist such an entity, you have to create aBlob using theBlobProxy Hibernate utility:

java.sql.Blob entitybyte[]image=newbyte[]{1,2,3};finalProductproduct=newProduct();product.setId(1);product.setName("Mobile phone");product.setImage(BlobProxy.generateProxy(image));entityManager.persist(product);To retrieve theBlob content, you need to transform the underlyingjava.io.InputStream:

java.sql.Blob entityProductproduct=entityManager.find(Product.class,productId);try(InputStreaminputStream=product.getImage().getBinaryStream()){assertArrayEquals(newbyte[]{1,2,3},toBytes(inputStream));}We could also map the BLOB in a materialized form (e.g.byte[]).

BLOB mapped tobyte[]@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@Lobprivatebyte[]image;//Getters and setters are omitted for brevity}3.2.21. Duration

By default, Hibernate mapsDuration to theNUMERIC SQL type.

It’s possible to mapDuration to theINTERVAL_SECOND SQL type using@JdbcTypeCode(INTERVAL_SECOND) or by settinghibernate.type.preferred_duration_jdbc_type=INTERVAL_SECOND |

privateDurationduration;3.2.22. Instant

Instant is mapped to theTIMESTAMP_UTC SQL type.

// mapped as TIMESTAMPprivateInstantinstant;SeeHandling temporal data for basics of temporal mapping

3.2.23. LocalDate

LocalDate is mapped to theDATE JDBC type.

// mapped as DATEprivateLocalDatelocalDate;SeeHandling temporal data for basics of temporal mapping

3.2.24. LocalDateTime

LocalDateTime is mapped to theTIMESTAMP JDBC type.

// mapped as TIMESTAMPprivateLocalDateTimelocalDateTime;SeeHandling temporal data for basics of temporal mapping

3.2.25. LocalTime

LocalTime is mapped to theTIME JDBC type.

// mapped as TIMEprivateLocalTimelocalTime;SeeHandling temporal data for basics of temporal mapping

3.2.26. OffsetDateTime

OffsetDateTime is mapped to theTIMESTAMP orTIMESTAMP_WITH_TIMEZONE JDBC typedepending on the database.

// mapped as TIMESTAMP or TIMESTAMP_WITH_TIMEZONEprivateOffsetDateTimeoffsetDateTime;SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.27. OffsetTime

OffsetTime is mapped to theTIME orTIME_WITH_TIMEZONE JDBC typedepending on the database.

// mapped as TIME or TIME_WITH_TIMEZONEprivateOffsetTimeoffsetTime;SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.28. TimeZone

TimeZone is mapped toVARCHAR JDBC type.

// mapped as VARCHARprivateTimeZonetimeZone;3.2.29. ZonedDateTime

ZonedDateTime is mapped to theTIMESTAMP orTIMESTAMP_WITH_TIMEZONE JDBC typedepending on the database.

// mapped as TIMESTAMP or TIMESTAMP_WITH_TIMEZONEprivateZonedDateTimezonedDateTime;SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.30. ZoneOffset

ZoneOffset is mapped toVARCHAR JDBC type.

// mapped as VARCHARprivateZoneOffsetzoneOffset;3.2.31. Calendar

SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.32. Date

SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.33. Time

SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.34. Timestamp

SeeHandling temporal data for basics of temporal mappingSeeUsing a specific time zone for basics of time-zone handling

3.2.35. Class

Hibernate mapsClass references toVARCHAR JDBC type

// mapped as VARCHARprivateClass<?>clazz;3.2.36. Currency

Hibernate mapsCurrency references toVARCHAR JDBC type

// mapped as VARCHARprivateCurrencycurrency;3.2.37. Locale

Hibernate mapsLocale references toVARCHAR JDBC type

// mapped as VARCHARprivateLocalelocale;3.2.38. UUID

Hibernate allows mapping UUID values in a number of ways. By default, Hibernate willstore UUID values in the native form by using the SQL typeUUID or in binary form with theBINARY JDBC typeif the database does not have a native UUID type.

The default uses the binary representation because it uses a more efficient column storage. However, many applications prefer the readability of the character-based column storage. To switch the default mapping, set the |

UUID as binary

As mentioned, the default mapping for UUID attributes.Maps the UUID to abyte[] usingjava.util.UUID#getMostSignificantBits andjava.util.UUID#getLeastSignificantBits and stores that asBINARY data.

Chosen as the default simply because it is generally more efficient from a storage perspective.

UUID as (var)char

Maps the UUID to a String usingjava.util.UUID#toString andjava.util.UUID#fromString and stores that asCHAR orVARCHAR data.

UUID as identifier

Hibernate supports using UUID values as identifiers, and they can even be generated on the user’s behalf.For details, see the discussion of generators inIdentifiers.

3.2.39. InetAddress

By default, Hibernate will mapInetAddress to theINET SQL type and fallback toBINARY if necessary.

privateInetAddressaddress;3.2.40. JSON mapping

Hibernate will only use theJSON type if explicitly configured through@JdbcTypeCode( SqlTypes.JSON ).The JSON library used for serialization/deserialization is detected automatically,but can be overridden by settinghibernate.type.json_format_mapperas can be read in theConfigurations section.

@JdbcTypeCode(SqlTypes.JSON)privateMap<String,String>stringMap;3.2.41. XML mapping

Hibernate will only use theXML type if explicitly configured through@JdbcTypeCode( SqlTypes.SQLXML ).The XML library used for serialization/deserialization is detected automatically,but can be overridden by settinghibernate.type.xml_format_mapperas can be read in theConfigurations section.

@JdbcTypeCode(SqlTypes.SQLXML)privateMap<String,StringNode>stringMap;3.2.42. Basic array mapping

Basic arrays, other thanbyte[]/Byte[] andchar[]/Character[], map to the type codeSqlTypes.ARRAY by default,which maps to the SQL standardarray type if possible,as determined via the new methodsgetArrayTypeName andsupportsStandardArrays oforg.hibernate.dialect.Dialect.If SQL standard array types are not available, data will be modeled asSqlTypes.JSON,SqlTypes.XML orSqlTypes.VARBINARY,depending on the database support as determined via the new methodorg.hibernate.dialect.Dialect.getPreferredSqlTypeCodeForArray.

Short[]wrapper;short[]primitive;3.2.43. Basic collection mapping

Basic collections (only subtypes ofCollection), which are not annotated with@ElementCollection,map to the type codeSqlTypes.ARRAY by default, which maps to the SQL standardarray type if possible,as determined via the new methodsgetArrayTypeName andsupportsStandardArrays oforg.hibernate.dialect.Dialect.If SQL standard array types are not available, data will be modeled asSqlTypes.JSON,SqlTypes.XML orSqlTypes.VARBINARY,depending on the database support as determined via the new methodorg.hibernate.dialect.Dialect.getPreferredSqlTypeCodeForArray.

List<Short>list;SortedSet<Short>sortedSet;3.2.44. Compositional basic mapping

The compositional approach allows defining how the mapping should work in terms of influencingindividual parts that make up a basic-value mapping. This section will look at these individualparts and the specifics of influencing each.

JavaType

Hibernate needs to understand certain aspects of the Java type to handle values properly and efficiently.Hibernate understands these capabilities through itsorg.hibernate.type.descriptor.java.JavaType contract.Hibernate provides built-in support for many JDK types (Integer,String, e.g.), but also supports the abilityfor the application to change the handling for any of the standardJavaType registrations as well asadd in handling for non-standard types. Hibernate provides multiple ways for the application to influencetheJavaType descriptor to use.

The resolution can be influenced locally using the@JavaType annotation on a particular mapping. Theindicated descriptor will be used just for that mapping. There are also forms of@JavaType for influencingthe keys of a Map (@MapKeyJavaType), the index of a List or array (@ListIndexJavaType), the identifierof an ID-BAG mapping (@CollectionIdJavaType) as well as the discriminator (@AnyDiscriminator) andkey (@AnyKeyJavaClass,@AnyKeyJavaType) of an ANY mapping.

The resolution can also be influenced globally by registering the appropriateJavaType descriptor with theJavaTypeRegistry. This approach is able to both "override" the handling for certain Java types orto register new types. SeeRegistries for discussion ofJavaTypeRegistry.

SeeResolving the composition for a discussion of the process used to resolve themapping composition.

JdbcType

Hibernate also needs to understand aspects of the JDBC type it should use (how it should bind values,how it should extract values, etc.) which is the role of itsorg.hibernate.type.descriptor.jdbc.JdbcTypecontract. Hibernate provides multiple ways for the application to influence theJdbcType descriptor to use.

Locally, the resolution can be influenced using either the@JdbcType or@JdbcTypeCode annotations. Thereare also annotations for influencing theJdbcType in relation to Map keys (@MapKeyJdbcType,@MapKeyJdbcTypeCode),the index of a List or array (@ListIndexJdbcType,@ListIndexJdbcTypeCode), the identifier of an ID-BAG mapping(@CollectionIdJdbcType,@CollectionIdJdbcTypeCode) as well as the key of an ANY mapping (@AnyKeyJdbcType,@AnyKeyJdbcTypeCode). The@JdbcType specifies a specificJdbcType implementation to use while@JdbcTypeCodespecifies a "code" that is then resolved against theJdbcTypeRegistry.

The "type code" relative to a |

Customizing theJdbcTypeRegistry can be accomplished through@JdbcTypeRegistration andTypeContributor. SeeRegistries for discussion ofJavaTypeRegistry.SeeTypeContributor for discussion ofTypeContributor.

See the@JdbcTypeCode Javadoc for details.

SeeResolving the composition for a discussion of the process used to resolve themapping composition.

MutabilityPlan

MutabilityPlan is the means by which Hibernate understands how to deal with the domain value in termsof its internal mutability as well as related concerns such as making copies. While it seems like a minorconcern, it can have a major impact on performance. SeeAttributeConverter Mutability Plan for one case wherethis can manifest. See alsoCase Study : BitSet for another discussion.

TheMutabilityPlan for a mapping can be influenced by any of the following annotations:

@Mutability@Immutable@MapKeyMutability@CollectionIdMutability

Hibernate checks the following places for@Mutability and@Immutable, in order of precedence:

Local to the mapping

On the associated

AttributeConverterimplementation class (if one)On the value’s Java type

In most cases, the fallback defined byJavaType#getMutabilityPlan is the proper strategy.

Hibernate usesMutabilityPlan to:

Check whether a value is considered dirty

Make deep copies

Marshal values to and from the second-level cache

Generally speaking, immutable values perform better in all of these cases

To check for dirtiness, Hibernate just needs to check object identity (

==) as opposedto equality (Object#equals).The same value instance can be used as the deep copy of itself.

The same value can be used from the second-level cache as well as the value we put into thesecond-level cache.

If a particular Java type is considered mutable (aDate e.g.),@Immutable or a immutable-specificMutabilityPlan implementation can be specified to have Hibernate treat the value as immutable. Thisalso acts as a contract from the application that the internal state of these objects is not changedby the application. Specifying that a mutable type is immutable and then changing the internal statewill lead to problems; so only do this if the application unequivocally does not change the internalstate.

SeeResolving the composition for a discussion of the process used to resolve themapping composition.

BasicValueConverter

BasicValueConverter is roughly analogous toAttributeConverter in that it describes a conversion tohappen when reading or writing values of a basic-valued model part. In fact, internally Hibernate wrapsan appliedAttributeConverter in aBasicValueConverter. It also applies implicitBasicValueConverterconverters in certain cases such as enum handling, etc.

Hibernate does not provide an explicit facility to influence these conversions beyondAttributeConverter.SeeAttributeConverters.

SeeResolving the composition for a discussion of the process used to resolve themapping composition.

Resolving the composition

Using this composition approach, Hibernate will need to resolve certain parts of this mapping. Oftenthis involves "filling in the blanks" as it will be configured for just parts of the mapping. This sectionoutlines how this resolution happens.

This is a complicated process and is only covered at a high level for the most common cases here. For the full specifics, consult the source code for |

First, we look for a custom type. If found, this takes predence. SeeCustom type mapping for details

If anAttributeConverter is applied, we use it as the basis for the resolution

If

@JavaTypeis also used, that specificJavaTypeis used for the converter’s "domain type". Otherwise,the Java type defined by the converter as its "domain type" is resolved against theJavaTypeRegistryIf

@JdbcTypeor@JdbcTypeCodeis used, the indicatedJdbcTypeis used and the converted "relational Javatype" is determined byJdbcType#getJdbcRecommendedJavaTypeMapping. Otherwise, the Java type defined by theconverter as its relational type is used and theJdbcTypeis determined byJdbcType#getRecommendedJdbcTypeThe

MutabilityPlancan be specified using@Mutabilityor@Immutableon theAttributeConverterimplementation,the basic value mapping or the Java type used as the domain-type. Otherwise,JdbcType#getJdbcRecommendedJavaTypeMappingfor the conversion’s domain-type is used to determine the mutability-plan.

Next we try to resolve theJavaType to use for the mapping. We check for an explicit@JavaType and use the specifiedJavaType if found. Next any "implicit" indication is checked; for example, the index for a List has the implicit Java typeofInteger. Next, we use reflection if possible. If we are unable to determine theJavaType to use through the preceedingsteps, we try to resolve an explicitly specifiedJdbcType to use and, if found, use itsJdbcType#getJdbcRecommendedJavaTypeMapping as the mapping’sJavaType. If we are not able to determine theJavaType by this point, an error is thrown.

TheJavaType resolved earlier is then inspected for a number of special cases.

For enum values, we check for an explicit

@Enumeratedand create an enumeration mapping. Note that this resolutionstill uses any explicitJdbcTypeindicatorsFor temporal values, we check for

@Temporaland create an enumeration mapping. Note that this resolutionstill uses any explicitJdbcTypeindicators; this includes@JdbcTypeand@JdbcTypeCode, as well as@TimeZoneStorageand@TimeZoneColumnif appropriate.

The fallback at this point is to use theJavaType andJdbcType determined in earlier steps to create aJDBC-mapping (which encapsulates theJavaType andJdbcType) and combines it with the resolvedMutabilityPlan

When using the compositional approach, there are other ways to influence the resolution as coveredinEnums,Handling temporal data,Handling LOB data andHandling nationalized character data

SeeTypeContributor for an alternative to@JavaTypeRegistration and@JdbcTypeRegistration.

3.2.45. Custom type mapping

Another approach is to supply the implementation of theorg.hibernate.usertype.UserType contract using@Type.

There are also corresponding, specialized forms of@Type for specific model parts:

When mapping a Map,

@Typedescribes the Map value while@MapKeyTypedescribe the Map keyWhen mapping an id-bag,

@Typedescribes the elements while@CollectionIdTypedescribes the collection-idFor other collection mappings,

@Typedescribes the elementsFor discriminated association mappings (

@Anyand@ManyToAny),@Typedescribes the discriminator value

@Type allows for more complex mapping concerns; but,AttributeConverter andCompositional basic mapping should generally be preferred as simpler solutions

3.2.46. Handling nationalized character data

How nationalized character data is handled and stored depends on the underlying database.

Most databases support storing nationalized character data through the standardized SQLNCHAR, NVARCHAR, LONGNVARCHAR and NCLOB variants.

Others support storing nationalized data as part of CHAR, VARCHAR, LONGVARCHARand CLOB. Generally these databases do not support NCHAR, NVARCHAR, LONGNVARCHARand NCLOB, even as aliased types.

Ultimately Hibernate understands this throughDialect#getNationalizationSupport()

To ensure nationalized character data gets stored and accessed correctly,@Nationalizedcan be used locally orhibernate.use_nationalized_character_data can be set globally.

|

For databases with no See alsoHandling LOB data regarding similar limitation for databases which do not supportexplicit |

Considering we have the following database table:

NVARCHAR - SQLCREATETABLEProduct(idINTEGERNOTNULL,nameVARCHAR(255),warrantyNVARCHAR(255),PRIMARYKEY(id))To map a specific attribute to a nationalized variant data type, Hibernate defines the@Nationalized annotation.

NVARCHAR mapping@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateStringname;@NationalizedprivateStringwarranty;//Getters and setters are omitted for brevity}3.2.47. Handling LOB data

The@Lob annotation specifies that character or binary data should be written to the databaseusing the special JDBC APIs for handling database LOB (Large OBject) types.

How JDBC deals with Some database drivers (i.e. PostgreSQL) are especially problematic and in such cases you mighthave to do some extra work to get LOBs functioning. But that’s beyond the scope of this guide. |

For databases with no |

There’s two ways a LOB may be represented in the Java domain model:

using a special JDBC-definedLOB locator type, or

using a regular "materialized" type like

String,char[], orbyte[].

LOB Locator

The JDBC LOB locator types are:

java.sql.Blobjava.sql.Clobjava.sql.NClob

These types represent references to off-table LOB data.In principle, they allow JDBC drivers to support more efficient access to the LOB data.Some drivers stream parts of the LOB data as needed, potentially consuming less memory.

However,java.sql.Blob andjava.sql.Clob can be unnatural to deal with and suffercertain limitations.For example, it’s not portable to access a LOB locator after the end of the transactionin which it was obtained.

Materialized LOB

Alternatively, Hibernate lets you access LOB data via the familiar Java typesString,char[], andbyte[]. But of course this requires materializing the entire contentsof the LOB in memory when the object is first retrieved. Whether this performance costis acceptable depends on many factors, including the vagaries of the JDBC driver.

You don’t need to use a |

3.2.48. Handling temporal data

Hibernate supports mapping temporal values in numerous ways, though ultimately these strategiesboil down to the 3 main Date/Time types defined by the SQL specification:

- DATE

Represents a calendar date by storing years, months and days.

- TIME

Represents the time of a day by storing hours, minutes and seconds.

- TIMESTAMP

Represents both a DATE and a TIME plus nanoseconds.

- TIMESTAMP WITH TIME ZONE

Represents both a DATE and a TIME plus nanoseconds and zone id or offset.

The mapping ofjava.time temporal types to the specific SQL Date/Time types is implied as follows:

- DATE

java.time.LocalDate- TIME

java.time.LocalTime,java.time.OffsetTime- TIMESTAMP

java.time.Instant,java.time.LocalDateTime,java.time.OffsetDateTimeandjava.time.ZonedDateTime- TIMESTAMP WITH TIME ZONE

java.time.OffsetDateTime,java.time.ZonedDateTime

Although Hibernate recommends the use of thejava.time package for representing temporal values,it does support usingjava.sql.Date,java.sql.Time,java.sql.Timestamp,java.util.Date andjava.util.Calendar.

The mappings forjava.sql.Date,java.sql.Time,java.sql.Timestamp are implicit:

- DATE

java.sql.Date- TIME

java.sql.Time- TIMESTAMP

java.sql.Timestamp

Applying |

When usingjava.util.Date orjava.util.Calendar, Hibernate assumesTIMESTAMP. To alter that,use@Temporal.

// mapped as TIMESTAMP by defaultDatedateAsTimestamp;// explicitly mapped as DATE@Temporal(TemporalType.DATE)DatedateAsDate;// explicitly mapped as TIME@Temporal(TemporalType.TIME)DatedateAsTime;Using a specific time zone

By default, Hibernate is going to use thePreparedStatement.setTimestamp(int parameterIndex, java.sql.Timestamp) orPreparedStatement.setTime(int parameterIndex, java.sql.Time x) when saving ajava.sql.Timestamp or ajava.sql.Time property.

When the time zone is not specified, the JDBC driver is going to use the underlying JVM default time zone, which might not be suitable if the application is used from all across the globe.For this reason, it is very common to use a single reference time zone (e.g. UTC) whenever saving/loading data from the database.

One alternative would be to configure all JVMs to use the reference time zone:

- Declaratively

java-Duser.timezone=UTC...- Programmatically

TimeZone.setDefault(TimeZone.getTimeZone("UTC"));

However, as explained inthis article, this is not always practical, especially for front-end nodes.For this reason, Hibernate offers thehibernate.jdbc.time_zone configuration property which can be configured:

- Declaratively, at the

SessionFactorylevel settings.put(AvailableSettings.JDBC_TIME_ZONE,TimeZone.getTimeZone("UTC"));- Programmatically, on a per

Sessionbasis Sessionsession=sessionFactory().withOptions().jdbcTimeZone(TimeZone.getTimeZone("UTC")).openSession();

With this configuration property in place, Hibernate is going to call thePreparedStatement.setTimestamp(int parameterIndex, java.sql.Timestamp, Calendar cal) orPreparedStatement.setTime(int parameterIndex, java.sql.Time x, Calendar cal), where thejava.util.Calendar references the time zone provided via thehibernate.jdbc.time_zone property.

Handling time zoned temporal data

By default, Hibernate will convert and normalizeOffsetDateTime andZonedDateTime tojava.sql.Timestamp in UTC.This behavior can be altered by configuring thehibernate.timezone.default_storage property

settings.put(AvailableSettings.TIMEZONE_DEFAULT_STORAGE,TimeZoneStorageType.AUTO);Other possible storage types areAUTO,COLUMN,NATIVE andNORMALIZE (the default).WithCOLUMN, Hibernate will save the time zone information into a dedicated column,whereasNATIVE will require the support of database for aTIMESTAMP WITH TIME ZONE data typethat retains the time zone information.NORMALIZE doesn’t store time zone information and will simply convert the timestamp to UTC.Hibernate understands what a database/dialect supports throughDialect#getTimeZoneSupportand will abort with a boot error if theNATIVE is used in conjunction with a database that doesn’t support this.ForAUTO, Hibernate tries to useNATIVE if possible and falls back toCOLUMN otherwise.

3.2.49.@TimeZoneStorage

Hibernate supports defining the storage to use for time zone information for individual propertiesvia the@TimeZoneStorage and@TimeZoneColumn annotations.The storage type can be specified via the@TimeZoneStorage by specifying aorg.hibernate.annotations.TimeZoneStorageType.The default storage type isAUTO which will ensure that the time zone information is retained.The@TimeZoneColumn annotation can be used in conjunction withAUTO orCOLUMN and allows to definethe column details for the time zone information storage.

Storing the zone offset might be problematic for future timestamps as zone rules can change.Due to this, storing the offset is only safe for past timestamps, and we advise sticking to the |

@TimeZoneColumn usage@TimeZoneStorage(TimeZoneStorageType.COLUMN)@TimeZoneColumn(name="birthtime_offset_offset")@Column(name="birthtime_offset")privateOffsetTimeoffsetTimeColumn;@TimeZoneStorage(TimeZoneStorageType.COLUMN)@TimeZoneColumn(name="birthday_offset_offset")@Column(name="birthday_offset")privateOffsetDateTimeoffsetDateTimeColumn;@TimeZoneStorage(TimeZoneStorageType.COLUMN)@TimeZoneColumn(name="birthday_zoned_offset")@Column(name="birthday_zoned")privateZonedDateTimezonedDateTimeColumn;3.2.50. AttributeConverters

With a customAttributeConverter, the application developer can map a given JDBC type to an entity basic type.

In the following example, thejava.time.Period is going to be mapped to aVARCHAR database column.

java.time.Period customAttributeConverter@ConverterpublicclassPeriodStringConverterimplementsAttributeConverter<Period,String>{@OverridepublicStringconvertToDatabaseColumn(Periodattribute){returnattribute.toString();}@OverridepublicPeriodconvertToEntityAttribute(StringdbData){returnPeriod.parse(dbData);}}To make use of this custom converter, the@Convert annotation must decorate the entity attribute.

java.time.PeriodAttributeConverter mapping@Entity(name="Event")publicstaticclassEvent{@Id@GeneratedValueprivateLongid;@Convert(converter=PeriodStringConverter.class)@Column(columnDefinition="")privatePeriodspan;//Getters and setters are omitted for brevity}When persisting such entity, Hibernate will do the type conversion based on theAttributeConverter logic:

AttributeConverterINSERTINTOEvent(span,id)VALUES('P1Y2M3D',1)AnAttributeConverter can be applied globally for (@Converter( autoApply=true )) or locally.

AttributeConverter Java and JDBC types

In cases when the Java type specified for the "database side" of the conversion (the secondAttributeConverter bind parameter) is not known,Hibernate will fallback to ajava.io.Serializable type.

If the Java type is not known to Hibernate, you will encounter the following message:

HHH000481: Encountered Java type for which we could not locate a JavaType and which does not appear to implement equals and/or hashCode.This can lead to significant performance problems when performing equality/dirty checking involving this Java type.Consider registering a custom JavaType or at least implementing equals/hashCode.

A Java type is "known" if it has an entry in theJavaTypeRegistry. While Hibernate does load many JDK types intotheJavaTypeRegistry, an application can also expand theJavaTypeRegistry by adding newJavaTypeentries as discussed inCompositional basic mapping andTypeContributor.

Mapping an AttributeConverter using HBM mappings

When using HBM mappings, you can still make use of the Jakarta PersistenceAttributeConverter because Hibernate supportssuch mapping via thetype attribute as demonstrated by the following example.

Let’s consider we have an application-specificMoney type:

Money typepublicclassMoney{privatelongcents;publicMoney(longcents){this.cents=cents;}publiclonggetCents(){returncents;}publicvoidsetCents(longcents){this.cents=cents;}}Now, we want to use theMoney type when mapping theAccount entity:

Account entity using theMoney typepublicclassAccount{privateLongid;privateStringowner;privateMoneybalance;//Getters and setters are omitted for brevity}Since Hibernate has no knowledge how to persist theMoney type, we could use a Jakarta PersistenceAttributeConverterto transform theMoney type as aLong. For this purpose, we are going to use the followingMoneyConverter utility:

MoneyConverter implementing the Jakarta PersistenceAttributeConverter interfacepublicclassMoneyConverterimplementsAttributeConverter<Money,Long>{@OverridepublicLongconvertToDatabaseColumn(Moneyattribute){returnattribute==null?null:attribute.getCents();}@OverridepublicMoneyconvertToEntityAttribute(LongdbData){returndbData==null?null:newMoney(dbData);}}To map theMoneyConverter using HBM configuration files you need to use theconverted:: prefix in thetypeattribute of theproperty element.

AttributeConverter<?xmlversion="1.0"?><!--~SPDX-License-Identifier:Apache-2.0~CopyrightRedHatInc.andHibernateAuthors--><!DOCTYPEhibernate-mappingPUBLIC"-//Hibernate/Hibernate Mapping DTD 3.0//EN""http://www.hibernate.org/dtd/hibernate-mapping-3.0.dtd"><hibernate-mappingpackage="org.hibernate.orm.test.mapping.converter.hbm"> <class name="org.hibernate.orm.test.mapping.converter.hbm.Account" table="account" > <id name="id"/> <property name="owner"/> <property name="balance" type="converted::org.hibernate.orm.test.mapping.converter.hbm.MoneyConverter"/></class></hibernate-mapping>AttributeConverter Mutability Plan

A basic type that’s converted by a Jakarta PersistenceAttributeConverter is immutable if the underlying Java type is immutableand is mutable if the associated attribute type is mutable as well.

Therefore, mutability is given by theJavaType#getMutabilityPlanof the associated entity attribute type.

This can be adjusted by using@Immutable or@Mutability on any of:

the basic value

the

AttributeConverterclassthe basic value type

SeeMapping basic values for additional details.

Immutable types

If the entity attribute is aString, a primitive wrapper (e.g.Integer,Long), an Enum type, or any other immutableObject type,then you can only change the entity attribute value by reassigning it to a new value.

Considering we have the samePeriod entity attribute as illustrated in theAttributeConverters section:

@Entity(name="Event")publicstaticclassEvent{@Id@GeneratedValueprivateLongid;@Convert(converter=PeriodStringConverter.class)@Column(columnDefinition="")privatePeriodspan;//Getters and setters are omitted for brevity}The only way to change thespan attribute is to reassign it to a different value:

Eventevent=entityManager.createQuery("from Event",Event.class).getSingleResult();event.setSpan(Period.ofYears(3).plusMonths(2).plusDays(1));Mutable types

On the other hand, consider the following example where theMoney type is a mutable.

publicstaticclassMoney{privatelongcents;//Getters and setters are omitted for brevity}@Entity(name="Account")publicstaticclassAccount{@IdprivateLongid;privateStringowner;@Convert(converter=MoneyConverter.class)privateMoneybalance;//Getters and setters are omitted for brevity}publicstaticclassMoneyConverterimplementsAttributeConverter<Money,Long>{@OverridepublicLongconvertToDatabaseColumn(Moneyattribute){returnattribute==null?null:attribute.getCents();}@OverridepublicMoneyconvertToEntityAttribute(LongdbData){returndbData==null?null:newMoney(dbData);}}A mutableObject allows you to modify its internal structure, and Hibernate’s dirty checking mechanism is going to propagate the change to the database:

Accountaccount=entityManager.find(Account.class,1L);account.getBalance().setCents(150*100L);entityManager.persist(account);Although the For this reason, prefer immutable types over mutable ones whenever possible. |

Using the AttributeConverter entity property as a query parameter

Assuming you have the following entity:

Photo entity withAttributeConverter@Entity(name="Photo")publicstaticclassPhoto{@IdprivateIntegerid;@Column(length=256)privateStringname;@Column(length=256)@Convert(converter=CaptionConverter.class)privateCaptioncaption;//Getters and setters are omitted for brevity}And theCaption class looks as follows:

Caption Java objectpublicstaticclassCaption{privateStringtext;publicCaption(Stringtext){this.text=text;}publicStringgetText(){returntext;}publicvoidsetText(Stringtext){this.text=text;}@Overridepublicbooleanequals(Objecto){if(this==o){returntrue;}if(o==null||getClass()!=o.getClass()){returnfalse;}Captioncaption=(Caption)o;returntext!=null?text.equals(caption.text):caption.text==null;}@OverridepublicinthashCode(){returntext!=null?text.hashCode():0;}}And we have anAttributeConverter to handle theCaption Java object:

Caption Java object AttributeConverterpublicstaticclassCaptionConverterimplementsAttributeConverter<Caption,String>{@OverridepublicStringconvertToDatabaseColumn(Captionattribute){returnattribute.getText();}@OverridepublicCaptionconvertToEntityAttribute(StringdbData){returnnewCaption(dbData);}}Traditionally, you could only use the DB dataCaption representation, which in our case is aString, when referencing thecaption entity property.

Caption property using the DB data representationPhotophoto=entityManager.createQuery("select p "+"from Photo p "+"where upper(caption) = upper(:caption) ",Photo.class).setParameter("caption","Nicolae Grigorescu").getSingleResult();In order to use the Java objectCaption representation, you have to get the associated HibernateType.

Caption property using the Java Object representationSessionFactoryImplementorsessionFactory=entityManager.getEntityManagerFactory().unwrap(SessionFactoryImplementor.class);finalMappingMetamodelImplementormappingMetamodel=sessionFactory.getRuntimeMetamodels().getMappingMetamodel();TypecaptionType=mappingMetamodel.getEntityDescriptor(Photo.class).getPropertyType("caption");Photophoto=(Photo)entityManager.createQuery("select p "+"from Photo p "+"where upper(caption) = upper(:caption) ",Photo.class).unwrap(Query.class).setParameter("caption",newCaption("Nicolae Grigorescu"),(BindableType)captionType).getSingleResult();By passing the associated HibernateType, you can use theCaption object when binding the query parameter value.

3.2.51. Registries

We’ve coveredJavaTypeRegistry andJdbcTypeRegistry a few times now, mainly in regards to mapping resolutionas discussed inResolving the composition. But they each also serve additional important roles.

TheJavaTypeRegistry is a registry ofJavaType references keyed by Java type. In addition to mapping resolution,this registry is used to handleClass references exposed in various APIs such asQuery parameter types.JavaType references can be registered through@JavaTypeRegistration.

TheJdbcTypeRegistry is a registry ofJdbcType references keyed by an integer code. As discussed inJdbcType, these type-codes typically match with the corresponding code fromjava.sql.Types, but that is not a requirement - integers other than those defined byjava.sql.Types canbe used. This might be useful for mapping JDBC User Data Types (UDTs) or other specialized database-specifictypes (PostgreSQL’s UUID type, e.g.). In addition to its use in mapping resolution, this registry is also usedas the primary source for resolving "discovered" values in a JDBCResultSet.JdbcType references can beregistered through@JdbcTypeRegistration.

SeeTypeContributor for an alternative to@JavaTypeRegistration and@JdbcTypeRegistration forregistration.

3.2.52. TypeContributor

org.hibernate.boot.model.TypeContributor is a contract for overriding or extending parts of the Hibernate typesystem.

There are many ways to integrate aTypeContributor. The most common is to define theTypeContributor asa Java service (seejava.util.ServiceLoader).

TypeContributor is passed aTypeContributions reference, which allows registration of customJavaType,JdbcType andBasicType references.

WhileTypeContributor still exposes the ability to registerBasicType references, this is considereddeprecated. As of 6.0, theseBasicType registrations are only used while interpretinghbm.xml mappings,which are themselves considered deprecated. UseCustom type mapping orCompositional basic mapping instead.

3.2.53. Case Study : BitSet

We’ve covered many ways to specify basic value mappings so far. This section will look at mapping thejava.util.BitSet type by applying the different techniques covered so far.

@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;privateBitSetbitSet;//Getters and setters are omitted for brevity}As mentioned previously, the worst-case fallback for Hibernate mapping a basic typewhich implementsSerializable is to simply serialize it to the database. BitSetdoes implementSerializable, so by default Hibernate would handle this mapping by serialization.

That is not an ideal mapping. In the following sections we will look at approaches to changevarious aspects of how the BitSet gets mapped to the database.

UsingAttributeConverter

We’ve seen uses ofAttributeConverter previously.

This works well in most cases and is portable across Jakarta Persistence providers.

@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;@Convert(converter=BitSetConverter.class)privateBitSetbitSet;//Getters and setters are omitted for brevity}@Converter(autoApply=true)publicstaticclassBitSetConverterimplementsAttributeConverter<BitSet,String>{@OverridepublicStringconvertToDatabaseColumn(BitSetattribute){returnBitSetHelper.bitSetToString(attribute);}@OverridepublicBitSetconvertToEntityAttribute(StringdbData){returnBitSetHelper.stringToBitSet(dbData);}}The SeeAttributeConverters for details. |

This greatly improves the reading and writing performance of dealing with theseBitSet values because theAttributeConverter does that more efficiently usinga simple externalizable form of the BitSet rather than serializing and deserializingthe values.

See alsoAttributeConverter Mutability Plan.

Using a customJavaTypeDescriptor

As covered in[basic-mapping-explicit], we will define aJavaTypeforBitSet that maps values toVARCHAR for storage by default.

publicclassBitSetJavaTypeextendsAbstractClassJavaType<BitSet>{publicstaticfinalBitSetJavaTypeINSTANCE=newBitSetJavaType();publicBitSetJavaType(){super(BitSet.class);}@OverridepublicMutabilityPlan<BitSet>getMutabilityPlan(){returnBitSetMutabilityPlan.INSTANCE;}@OverridepublicJdbcTypegetRecommendedJdbcType(JdbcTypeIndicatorsindicators){returnindicators.getTypeConfiguration().getJdbcTypeRegistry().getDescriptor(Types.VARCHAR);}@OverridepublicStringtoString(BitSetvalue){returnBitSetHelper.bitSetToString(value);}@OverridepublicBitSetfromString(CharSequencestring){returnBitSetHelper.stringToBitSet(string.toString());}@SuppressWarnings("unchecked")public<X>Xunwrap(BitSetvalue,Class<X>type,WrapperOptionsoptions){if(value==null){returnnull;}if(BitSet.class.isAssignableFrom(type)){return(X)value;}if(String.class.isAssignableFrom(type)){return(X)toString(value);}if(type.isArray()){if(type.getComponentType()==byte.class){return(X)value.toByteArray();}}throwunknownUnwrap(type);}public<X>BitSetwrap(Xvalue,WrapperOptionsoptions){if(value==null){returnnull;}if(valueinstanceofCharSequence){returnfromString((CharSequence)value);}if(valueinstanceofBitSet){return(BitSet)value;}throwunknownWrap(value.getClass());}}We can either apply that type locally using@JavaType

@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;@JavaType(BitSetJavaType.class)privateBitSetbitSet;//Constructors, getters, and setters are omitted for brevity}Or we can apply it globally using@JavaTypeRegistration. This allows the registeredJavaTypeto be used as the default whenever we encounter theBitSet type

@Entity(name="Product")@JavaTypeRegistration(javaType=BitSet.class,descriptorClass=BitSetJavaType.class)publicstaticclassProduct{@IdprivateIntegerid;privateBitSetbitSet;//Constructors, getters, and setters are omitted for brevity}Selecting differentJdbcTypeDescriptor

Our customBitSetJavaType mapsBitSet values toVARCHAR by default. That was a better optionthan direct serialization. But asBitSet is ultimately binary data we would probably really want tomap this toVARBINARY type instead. One way to do that would be to changeBitSetJavaType#getRecommendedJdbcTypeto instead returnVARBINARY descriptor. Another option would be to use a local@JdbcType or@JdbcTypeCode.

The following examples for specifying theJdbcType assume ourBitSetJavaTypeis globally registered.

We will again store the values asVARBINARY in the database. The difference now however is thatthe coercion methods#wrap and#unwrap will be used to prepare the value rather than relying onserialization.

@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;@JdbcTypeCode(Types.VARBINARY)privateBitSetbitSet;//Constructors, getters, and setters are omitted for brevity}In this example,@JdbcTypeCode has been used to indicate that theJdbcType registered for JDBC’sVARBINARY type should be used.

@Entity(name="Product")publicstaticclassProduct{@IdprivateIntegerid;@JdbcType(CustomBinaryJdbcType.class)privateBitSetbitSet;//Constructors, getters, and setters are omitted for brevity}In this example,@JdbcType has been used to specify our customBitSetJdbcType descriptor locally forthis attribute.

We could instead replace how Hibernate deals with allVARBINARY handling with our custom impl using@JdbcTypeRegistration

@Entity(name="Product")@JdbcTypeRegistration(CustomBinaryJdbcType.class)publicstaticclassProduct{@IdprivateIntegerid;privateBitSetbitSet;//Constructors, getters, and setters are omitted for brevity}3.2.54. SQL quoted identifiers

You can force Hibernate to quote an identifier in the generated SQL by enclosing the table or column name in backticks in the mapping document.While traditionally, Hibernate used backticks for escaping SQL reserved keywords, Jakarta Persistence uses double quotes instead.

Once the reserved keywords are escaped, Hibernate will use the correct quotation style for the SQLDialect.This is usually double quotes, but SQL Server uses brackets and MySQL uses backticks.

@Entity(name="Product")publicstaticclassProduct{@IdprivateLongid;@Column(name="`name`")privateStringname;@Column(name="`number`")privateStringnumber;//Getters and setters are omitted for brevity}@Entity(name="Product")publicstaticclassProduct{@IdprivateLongid;@Column(name="\"name\"")privateStringname;@Column(name="\"number\"")privateStringnumber;//Getters and setters are omitted for brevity}Becausename andnumber are reserved words, theProduct entity mapping uses backticks to quote these column names.

When saving the followingProduct entity, Hibernate generates the following SQL insert statement:

Productproduct=newProduct();product.setId(1L);product.setName("Mobile phone");product.setNumber("123-456-7890");entityManager.persist(product);INSERT INTO Product ("name", "number", id)VALUES ('Mobile phone', '123-456-7890', 1)Global quoting

Hibernate can also quote all identifiers (e.g. table, columns) using the following configuration property:

<propertyname="hibernate.globally_quoted_identifiers"value="true"/>This way, we don’t need to manually quote any identifier:

@Entity(name="Product")publicstaticclassProduct{@IdprivateLongid;privateStringname;privateStringnumber;//Getters and setters are omitted for brevity}When persisting aProduct entity, Hibernate is going to quote all identifiers as in the following example:

INSERT INTO "Product" ("name", "number", "id")VALUES ('Mobile phone', '123-456-7890', 1)As you can see, both the table name and all the column have been quoted.

For more about quoting-related configuration properties, check out theMapping configurations section as well.

3.2.55. Generated properties

- NOTE

This section talks about generating values for non-identifier attributes. For discussion of generated identifier values, seeGenerated identifier values.

Generated attributes have their values generated as part of performing a SQL INSERT or UPDATE. Applications can generate thesevalues in any number of ways (SQL DEFAULT value, trigger, etc). Typically, the application needs to refresh objects thatcontain any properties for which the database was generating values, which is a major drawback.

Applications can also delegate generation to Hibernate, in which case Hibernate will manage the value generationand (potential[3]) state refresh itself.

Only Generated attributes must additionally benon-insertable andnon-updateable. |