Introduction to architecting systems for scale.

Few computer science or software development programs attempt to teach the building blocks of scalable systems. Instead, system architecture is usually picked up on the job by working through the pain of a growing product or by working with engineers who have already learned through that suffering process.

In this post I’ll attempt to document some of the scalability architecture lessons I’ve learned while working on systems at Yahoo! and Digg.

I’ve attempted to maintain a color convention for diagrams:

- green is an external request from an external client (an HTTP request from a browser, etc),

- blue is your code running in some container (a Django app running on mod_wsgi, a Python script listening to RabbitMQ, etc), and

- red is a piece of infrastructure (MySQL, Redis, RabbitMQ, etc).

Load balancing

The ideal system increases capacity linearly with added hardware. In such a system, if you have one machine and add another, your capacity would double. If you had three and you add another, your capacity would increase by 33%. Let’s call this horizontal scalability.

On the failure side, an ideal system isn’t disrupted by the loss of a server. Losing a server should simply decrease system capacity by the same amount it increased overall capacity when it was added. Let’s call this redundancy.

Both horizontal scalability and redundancy are usually achieved via load balancing.

(This article won’t address vertical scalability, as it is usually an undesirable property for a large system: there is inevitably a point where it becomes cheaper to add capacity in the form of additional machines rather than additional resources on one machine, and redundancy and vertical scaling can be at odds with one another.)

Load balancing is the process of spreading requests across multiple resources according to some metric (random, round-robin, random with weighting for machine capacity, etc) and their current status (available for requests, not responding, elevated error rate, etc).
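As a minimal sketch of two of those metrics, here is a pool that picks hosts round-robin or by weighted random choice while skipping hosts marked as unavailable. The `Pool` class, the host names and the weights are all hypothetical; a real balancer would also track error rates and response times.

```python
import itertools
import random


class Pool:
    """A pool of hosts with a health flag and a capacity weight per host."""

    def __init__(self, weights):
        # weights maps host name -> relative capacity (hypothetical values).
        self.weights = dict(weights)
        self.healthy = set(self.weights)
        self._cycle = itertools.cycle(sorted(self.weights))

    def mark_down(self, host):
        self.healthy.discard(host)

    def mark_up(self, host):
        if host in self.weights:
            self.healthy.add(host)

    def round_robin(self):
        """Return the next healthy host in a fixed rotation."""
        if not self.healthy:
            raise RuntimeError("no healthy hosts")
        for host in self._cycle:
            if host in self.healthy:
                return host

    def weighted_random(self, rng=random):
        """Pick a healthy host with probability proportional to its weight."""
        hosts = sorted(self.healthy)
        if not hosts:
            raise RuntimeError("no healthy hosts")
        return rng.choices(hosts, weights=[self.weights[h] for h in hosts])[0]


pool = Pool({"web1": 1, "web2": 1, "web3": 2})
pool.mark_down("web2")  # e.g. after a failed health check
picks = [pool.round_robin() for _ in range(4)]
```

With `web2` marked down, the rotation alternates between the two remaining hosts, and `weighted_random` would favor `web3` because of its larger weight.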

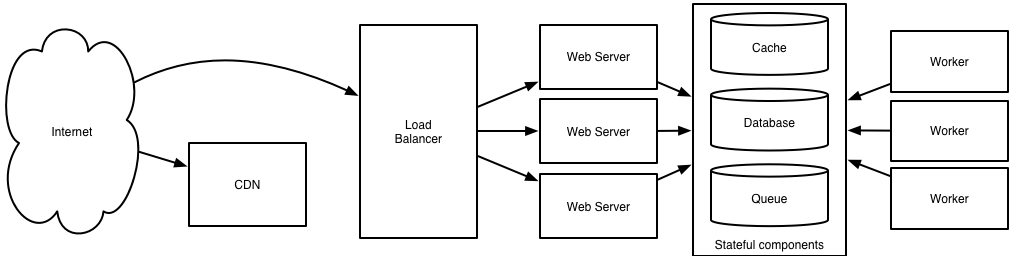

Load needs to be balanced between user requests and your web servers, but must also be balanced at every stage to achieve full scalability and redundancy for your system. A moderately large system may balance load at three layers:

- user to your web servers,

- web servers to an internal platform layer,

- internal platform layer to your database.

There are a number of ways to implement load balancing.

Smart clients

Adding load-balancing functionality into your database (cache, service, etc) client is usually an attractive solution for the developer. Is it attractive because it is the simplest solution? Usually, no. Is it seductive because it is the most robust? Sadly, no. Is it alluring because it’ll be easy to reuse? Tragically, no.

Developers lean towards smart clients because they are developers, and so they are used to writing software to solve their problems, and smart clients are software.

With that caveat in mind, what is a smart client? It is a client which takes a pool of service hosts and balances load across them, detects downed hosts and avoids sending requests their way (they also have to detect recovered hosts, deal with adding new hosts, etc, making them fun to get working decently and a terror to set up).
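To make those responsibilities concrete, here is a rough sketch of a smart client, assuming a hypothetical `send(host, payload)` callable that raises `ConnectionError` when a host is unreachable. The `SmartClient` name, the cooldown value and the fake transport are illustrative only; a production client needs far more care (timeouts, partial failures, thread safety).

```python
import time


class SmartClient:
    """Tries hosts in rotation and avoids hosts that recently failed."""

    def __init__(self, hosts, send, cooldown=30.0, clock=time.monotonic):
        self.hosts = list(hosts)
        self.send = send          # hypothetical callable: send(host, payload)
        self.cooldown = cooldown  # seconds before a downed host is retried
        self.clock = clock
        self.down_until = {}      # host -> time at which it may be retried
        self._next = 0

    def add_host(self, host):
        if host not in self.hosts:
            self.hosts.append(host)

    def _candidates(self):
        if not self.hosts:
            return []
        now = self.clock()
        n = len(self.hosts)
        # Rotate the starting point so load spreads across the pool.
        ordered = [self.hosts[(self._next + i) % n] for i in range(n)]
        self._next = (self._next + 1) % n
        return [h for h in ordered if self.down_until.get(h, 0.0) <= now]

    def request(self, payload):
        for host in self._candidates():
            try:
                result = self.send(host, payload)
            except ConnectionError:
                # Treat the host as down and skip it until the cooldown ends.
                self.down_until[host] = self.clock() + self.cooldown
                continue
            self.down_until.pop(host, None)  # host is healthy again
            return result
        raise ConnectionError("no hosts available")


def fake_send(host, payload):
    """Stand-in transport for the example: db1 is always unreachable."""
    if host == "db1":
        raise ConnectionError(host)
    return (host, payload)


now = [0.0]  # controllable clock so the example is deterministic
client = SmartClient(["db1", "db2"], fake_send, clock=lambda: now[0])
first = client.request("ping")  # fails over from db1 to db2
```

Even this toy version has to carry state about every host, which hints at why smart clients are harder to get right than they first appear.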

Hardware load balancers

The most expensive (but very high performance) solution to load balancing is to buy a dedicated hardware load balancer (something like a Citrix NetScaler). While they can solve a remarkable range of problems, hardware solutions are remarkably expensive, and they are also “non-trivial” to configure.

As such, even large companies with substantial budgets will often avoid using dedicated hardware for all their load-balancing needs; instead they use them only as the first point of contact from user requests to their infrastructure, and use other mechanisms (smart clients or the hybrid approach discussed in the next section) for load-balancing traffic within their network.

Software load balancers

If you want to avoid the pain of creating a smart client, and purchasing dedicated hardware is excessive, then the universe has been kind enough to provide a hybrid: software load-balancers.

HAProxy is a great example of this approach. It runs locally on each of your boxes, and each service you want to load-balance has a locally bound port. For example, you might have your platform machines accessible via localhost:9000, your database read-pool at localhost:9001 and your database write-pool at localhost:9002. HAProxy manages healthchecks and will remove and return machines to those pools according to your configuration, as well as balancing across all the machines in those pools.

For most systems, I’d recommend starting with a software load balancer and moving to smart clients or hardware load balancing only with deliberate need.
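From the application's point of view, this setup means the code only ever needs to know a handful of local ports. A minimal sketch, using the ports from the example above; the pool names and the `endpoint` helper are hypothetical:

```python
# Logical pool name -> port bound locally by the software load balancer.
# The names are hypothetical; the ports mirror the example in the text.
LOCAL_POOLS = {
    "platform": 9000,
    "db-read": 9001,
    "db-write": 9002,
}


def endpoint(pool):
    """Return the (host, port) the application should connect to."""
    return ("localhost", LOCAL_POOLS[pool])


read_host, read_port = endpoint("db-read")
```

The application never learns which machines sit behind each port, so hosts can be added or removed without touching application code.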

Caching

Load balancing helps you scale horizontally across an ever-increasing number of servers, but caching will enable you to make vastly better use of the resources you already have, as well as making otherwise unattainable product requirements feasible.

Caching consists of: precalculating results (e.g. the number of visits from each referring domain for the previous day), pre-generating expensive indexes (e.g. suggested stories based on a user’s click history), and storing copies of frequently accessed data in a faster backend (e.g. Memcache instead of PostgreSQL).

In practice, caching is important earlier in the development process than load-balancing, and starting with a consistent caching strategy will save you time later on. It also ensures you don’t optimize access patterns which can’t be replicated with your caching mechanism or access patterns where performance becomes unimportant after the addition of caching (I’ve found that many heavily optimized Cassandra applications are a challenge to cleanly add caching to if/when the database’s caching strategy can’t be applied to your access patterns, as the datamodel is generally inconsistent between Cassandra and your cache).

Application vs. database caching

There are two primary approaches to caching: application caching and database caching (most systems rely heavily on both).

Application caching requires explicit integration in the application code itself. Usually it will check if a value is in the cache; if not, retrieve the value from the database; then write that value into the cache (this pattern is especially common if you are using a cache which observes the least recently used caching algorithm). The code typically looks like this (specifically this is a read-through cache, as it reads the value from the database into the cache if it is missing from the cache):

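    import json

    def get_user(user_id):
        # memcache and mysql are assumed to be already-configured clients.
        key = "user.%s" % user_id
        user_blob = memcache.get(key)
        if user_blob is None:
            # Cache miss: read from the database, then populate the cache.
            user = mysql.query("SELECT * FROM users WHERE user_id=%s", user_id)
            if user:
                memcache.set(key, json.dumps(user))
            return user
        else:
            return json.loads(user_blob)

The other side of the coin is database caching.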

When you flip your database on, you’re going to get some level of default configuration which will provide some degree of caching and performance. Those initial settings will be optimized for a generic usecase, and by tweaking them to your system’s access patterns you can generally squeeze out a great deal of performance improvement.

The beauty of database caching is that your application code gets faster “for free”, and a talented DBA or operational engineer can uncover quite a bit of performance without your code changing a whit (my colleague Rob Coli spent some time recently optimizing our configuration for Cassandra row caches, and was successful to the extent that he spent a week harassing us with graphs showing the I/O load dropping dramatically and request latencies improving substantially as well).

In-memory caches

The most potent caches you’ll encounter, in terms of raw performance, are those which store their entire set of data in memory, because accesses to RAM are orders of magnitude faster than those to disk. Memcached and Redis are both examples of in-memory caches (caveat: Redis can be configured to store some data to disk).

On the other hand, you’ll generally have far less RAM available than disk space, so you’ll need a strategy for only keeping the hot subset of your data in your memory cache. The most straightforward strategy is least recently used, and is employed by Memcache (and Redis as of 2.2 can be configured to employ it as well). LRU works by evicting the data that was accessed least recently in favor of more recently accessed data, and is almost always an appropriate caching strategy.
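To illustrate the eviction rule, here is a small LRU cache built on Python's `collections.OrderedDict`. This is only a sketch of the idea, not how Memcached or Redis implement it internally; the `LRUCache` class and its capacity are made up for the example.

```python
from collections import OrderedDict


class LRUCache:
    """Fixed-capacity cache that evicts the least recently used key."""

    def __init__(self, capacity):
        if capacity < 1:
            raise ValueError("capacity must be at least 1")
        self.capacity = capacity
        self._items = OrderedDict()  # oldest access first, newest last

    def get(self, key, default=None):
        if key not in self._items:
            return default
        self._items.move_to_end(key)  # mark as most recently used
        return self._items[key]

    def set(self, key, value):
        self._items[key] = value
        self._items.move_to_end(key)
        if len(self._items) > self.capacity:
            self._items.popitem(last=False)  # evict least recently used

    def __contains__(self, key):
        return key in self._items


cache = LRUCache(capacity=2)
cache.set("a", 1)
cache.set("b", 2)
cache.get("a")     # "a" is now more recently used than "b"
cache.set("c", 3)  # over capacity, so "b" is evicted
```

Note that reads count as use: touching `"a"` before inserting `"c"` is what saves it from eviction.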

Content distribution networks

A particular kind of cache (some might argue with this usage of the term, but I find it fitting) which comes into play for sites serving large amounts of static media is the content distribution network.

CDNs take the burden of serving static media off of your application servers (which are typically optimized for serving dynamic pages rather than static media), and provide geographic distribution. Overall, your static assets will load more quickly and with less strain on your servers (but a new strain of business expense).

In a typical CDN setup, a request will first ask your CDN for a piece of static media, and the CDN will serve that content if it has it locally available (HTTP headers are used for configuring how the CDN caches a given piece of content). If it isn’t available, the CDN will query your servers for the file and then cache it locally and serve it to the requesting user (in this configuration they are acting as a read-through cache).

If your site isn’t yet large enough to merit its own CDN, you can ease a future transition by serving your static media off a separate subdomain (e.g. static.example.com) using a lightweight HTTP server like Nginx, and cut over the DNS from your servers to a CDN at a later date.
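The flow above can be modeled as a toy edge node. This sketch assumes a hypothetical `fetch_from_origin(path)` callable returning a body and response headers, and it honors only the `max-age` directive of `Cache-Control`; real CDNs consider many more headers and rules.

```python
import re
import time


class EdgeCache:
    """Toy model of one CDN edge node acting as a read-through cache."""

    def __init__(self, fetch_from_origin, clock=time.monotonic):
        # fetch_from_origin(path) -> (body, headers) is a hypothetical callable.
        self.fetch_from_origin = fetch_from_origin
        self.clock = clock
        self._store = {}  # path -> (body, expires_at)

    @staticmethod
    def max_age(headers):
        """Seconds of freshness from a Cache-Control max-age directive."""
        match = re.search(r"max-age=(\d+)", headers.get("Cache-Control", ""))
        return int(match.group(1)) if match else 0

    def get(self, path):
        entry = self._store.get(path)
        if entry is not None and entry[1] > self.clock():
            return entry[0]  # still fresh: served from the edge
        body, headers = self.fetch_from_origin(path)  # miss or expired
        ttl = self.max_age(headers)
        if ttl > 0:
            self._store[path] = (body, self.clock() + ttl)
        return body


origin_hits = []


def origin(path):
    """Stand-in for your own servers."""
    origin_hits.append(path)
    return b"image-bytes", {"Cache-Control": "public, max-age=3600"}


now = [0.0]  # controllable clock so the example is deterministic
edge = EdgeCache(origin, clock=lambda: now[0])
edge.get("/logo.png")  # miss: fetched from the origin and cached
edge.get("/logo.png")  # hit: the origin is not contacted again
```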

Cache invalidation

While caching is fantastic, it does require you to maintain consistency between your caches and the source of truth (i.e. your database), at risk of truly bizarre application behavior.

Solving this problem is known as cache invalidation.

If you’re dealing with a single datacenter, it tends to be a straightforward problem, but it’s easy to introduce errors if you have multiple codepaths writing to your database and cache (which is almost always going to happen if you don’t go into writing the application with a caching strategy already in mind). At a high level, the solution is: each time a value changes, write the new value into the cache (this is called a write-through cache) or simply delete the current value from the cache and allow a read-through cache to populate it later (choosing between read and write through caches depends on your application’s details, but generally I prefer write-through caches as they reduce likelihood of a stampede on your backend database).

Invalidation becomes meaningfully more challenging for scenarios involving fuzzy queries (e.g. if you are trying to add application level caching in front of a full-text search engine like SOLR), or modifications to an unknown number of elements (e.g. deleting all objects created more than a week ago).

In those scenarios you have to consider relying fully on database caching, adding aggressive expirations to the cached data, or reworking your application’s logic to avoid the issue (e.g. instead of DELETE FROM a WHERE..., retrieve all the items which match the criteria, invalidate the corresponding cache rows and then delete the rows by their primary key explicitly).
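The two options can be sketched side by side. Plain dictionaries stand in for the cache and the database here, and the function names are hypothetical; the point is only to show where each approach touches the cache.

```python
cache = {}     # stands in for Memcached or Redis
database = {}  # stands in for the source of truth


def read_user(user_id):
    """Read-through: fill the cache from the database on a miss."""
    key = "user.%s" % user_id
    if key not in cache:
        user = database.get(user_id)
        if user is None:
            return None
        cache[key] = user
    return cache[key]


def update_user_write_through(user_id, user):
    """Write the new value to the database, then into the cache."""
    database[user_id] = user
    cache["user.%s" % user_id] = user


def update_user_invalidate(user_id, user):
    """Write to the database and drop the cached copy.

    The next read_user call repopulates the cache.
    """
    database[user_id] = user
    cache.pop("user.%s" % user_id, None)


update_user_write_through(1, {"name": "ada"})  # cache is warm immediately
update_user_invalidate(1, {"name": "grace"})   # cache is empty until next read
```

After the invalidating update, every reader misses until one of them refills the cache, which is the window in which a stampede on the database can occur.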
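Here is a sketch of that last reworking, using an in-memory SQLite database and a dictionary as the cache so it runs anywhere. The `items` table, its columns and the `item.%s` cache key format are assumptions made for the example.

```python
import sqlite3


def delete_old_items(conn, cache, cutoff):
    """Delete rows older than cutoff, invalidating each cached row first."""
    # 1. Retrieve the primary keys of everything matching the criteria.
    ids = [row[0] for row in conn.execute(
        "SELECT id FROM items WHERE created_at < ? ORDER BY id", (cutoff,))]
    # 2. Invalidate the corresponding cache entries.
    for item_id in ids:
        cache.pop("item.%s" % item_id, None)
    # 3. Delete the rows explicitly by primary key.
    conn.executemany("DELETE FROM items WHERE id = ?", [(i,) for i in ids])
    conn.commit()
    return ids


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY, created_at INTEGER)")
conn.executemany("INSERT INTO items VALUES (?, ?)", [(1, 10), (2, 20), (3, 30)])
cache = {"item.1": "old", "item.3": "new"}
deleted = delete_old_items(conn, cache, cutoff=25)
```

The cost is an extra query and one cache operation per row, in exchange for knowing exactly which cache entries to drop.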

Off-line processing

As a system grows more complex, it is almost always necessary to perform processing which can’t be performed in-line with a client’s request, either because it creates unacceptable latency (e.g. you want to propagate a user’s action across a social graph) or because it needs to occur periodically (e.g. you want to create daily rollups of analytics).

Message queues

For processing you’d like to perform inline with a request but which is too slow, the easiest solution is to create a message queue (for example, RabbitMQ). Message queues allow your web applications to quickly publish messages to the queue, and have other consumer processes perform the processing outside the scope and timeline of the client request.

Dividing work between off-line work handled by a consumer and in-line work done by the web application depends entirely on the interface you are exposing to your users. Generally you’ll either:
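The publish/consume split can be sketched with Python's standard library, where an in-process `queue.Queue` and a thread stand in for a real broker such as RabbitMQ and a separate consumer process. The message shape and function names are hypothetical.

```python
import queue
import threading

tasks = queue.Queue()  # stand-in for a broker such as RabbitMQ
results = []


def handle_request(user_id):
    """Web tier: publish a message and return without doing the slow work."""
    tasks.put({"type": "propagate_action", "user_id": user_id})
    return "accepted"


def consumer():
    """Worker: process messages outside the request's timeline."""
    while True:
        message = tasks.get()
        if message is None:  # sentinel used in this example to stop the worker
            tasks.task_done()
            break
        results.append(message["user_id"])  # placeholder for the slow work
        tasks.task_done()


worker = threading.Thread(target=consumer, daemon=True)
worker.start()
status = handle_request(42)  # returns immediately
tasks.join()                 # only so the example can observe the result
```

In a real deployment the consumer would run on different machines from the web application, which is what makes the separate machine pool possible.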

- perform almost no work in-line (merely scheduling a task) and inform your user that the task will occur offline, usually with a polling mechanism to update the interface once the task is complete (for example, provisioning a new VM on Slicehost follows this pattern), or

- perform enough work in-line to make it appear to the user that the task has completed, and tie up hanging ends afterwards (posting a message on Twitter or Facebook likely follows this pattern by updating the tweet/message in your timeline but updating your followers’ timelines out of band; it simply isn’t feasible to update all the followers for a Scobleizer in real-time).

Message queues have another benefit, which is that they allow you to create a separate machine pool for performing off-line processing rather than burdening your web application servers. This allows you to target increases in resources to your current performance or throughput bottleneck rather than uniformly increasing resources across the bottleneck and non-bottleneck systems.
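The second pattern might look like the following sketch, where the author's own timeline is updated in-line and the fan-out to followers is deferred. The in-memory structures and the tiny social graph are made up for illustration, and a `deque` stands in for the message queue.

```python
from collections import defaultdict, deque

timelines = defaultdict(list)            # user -> list of messages
followers = {"alice": ["bob", "carol"]}  # hypothetical social graph
fanout_queue = deque()                   # stand-in for a message queue


def post_message(author, text):
    """In-line: update the author's own timeline and defer the fan-out."""
    timelines[author].append(text)
    fanout_queue.append((author, text))


def run_fanout_worker():
    """Off-line: copy queued messages to each follower's timeline."""
    while fanout_queue:
        author, text = fanout_queue.popleft()
        for follower in followers.get(author, []):
            timelines[follower].append(text)


post_message("alice", "hello")
visible_immediately = list(timelines["alice"])  # the author sees it right away
run_fanout_worker()                             # followers see it later
```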

Scheduling periodic tasks

Almost all large systems require daily or hourly tasks, but unfortunately this seems to still be a problem waiting for a widely accepted solution which easily supports redundancy. In the meantime you’re probably still stuck with cron, but you could use the cronjobs to publish messages to a consumer, which would mean that the cron machine is only responsible for scheduling rather than needing to perform all the processing.

Does anyone know of recognized tools which solve this problem? I’ve seen many homebrew systems, but nothing clean and reusable. Sure, you can store the cronjobs in a Puppet config for a machine, which makes recovering from losing that machine easy, but it would still require a manual recovery, which is likely acceptable but not perfect.
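A cron-invoked script in that style does nothing but describe the job and hand it off. In this sketch, `publish` is a hypothetical callable that would wrap your queue client, and the message fields are invented for the example.

```python
import datetime
import json


def build_rollup_message(day):
    """Describe the job; the consumer that receives it does the real work."""
    return json.dumps({"task": "daily_rollup", "date": day.isoformat()})


def main(publish, today=None):
    """Entry point a crontab line would invoke; publish is hypothetical."""
    today = today or datetime.date.today()
    yesterday = today - datetime.timedelta(days=1)
    publish(build_rollup_message(yesterday))


sent = []  # a list's append method stands in for a real publisher
main(sent.append, today=datetime.date(2024, 1, 2))
```

Because the script finishes in milliseconds, the cron machine stays lightly loaded no matter how expensive the rollup itself becomes.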

Map-reduce

If your large scale application is dealing with a large quantity of data, at some point you’re likely to add support for map-reduce, probably using Hadoop, and maybe Hive or HBase.

Adding a map-reduce layer makes it possible to perform data and/or processing intensive operations in a reasonable amount of time. You might use it for calculating suggested users in a social graph, or for generating analytics reports.

For sufficiently small systems you can often get away with adhoc queries on a SQL database, but that approach may not scale up trivially once the quantity of data stored or write-load requires sharding your database, and will usually require dedicated slaves for the purpose of performing these queries (at which point, maybe you’d rather use a system designed for analyzing large quantities of data, rather than fighting your database).
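For a feel of the programming model, here is a single-process sketch of an analytics job that counts visits per referring domain. It only mimics the map, shuffle and reduce phases in plain Python and does not use Hadoop's API; the function names and sample URLs are made up.

```python
from collections import defaultdict
from urllib.parse import urlparse


def map_visit(referrer_url):
    """Map step: emit (referring domain, 1) for one visit."""
    yield urlparse(referrer_url).netloc, 1


def shuffle(pairs):
    """Group emitted values by key, as the framework would between steps."""
    grouped = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_counts(domain, counts):
    """Reduce step: total the visits for one domain."""
    return domain, sum(counts)


visits = [
    "http://news.example.com/a",
    "http://blog.example.org/b",
    "http://news.example.com/c",
]
pairs = [pair for url in visits for pair in map_visit(url)]
totals = dict(reduce_counts(k, v) for k, v in shuffle(pairs).items())
```

Because each map call looks at one record and each reduce call looks at one key, a framework can spread both phases across many machines.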

Platform layer

Most applications start out with a web application communicating directly with a database. This approach tends to be sufficient for most applications, but there are some compelling reasons for adding a platform layer, such that your web applications communicate with a platform layer which in turn communicates with your databases.

First, separating the platform and web application allows you to scale the pieces independently. If you add a new API, you can add platform servers without adding unnecessary capacity for your web application tier. (Generally, specializing your servers’ role opens up an additional level of configuration optimization which isn’t available for general purpose machines; your database machine will usually have a high I/O load and will benefit from a solid-state drive, while your well-configured application server probably isn’t reading from disk at all during normal operation, but might benefit from more CPU.)

Second, adding a platform layer can be a way to reuse your infrastructure for multiple products or interfaces (a web application, an API, an iPhone app, etc) without writing too much redundant boilerplate code for dealing with caches, databases, etc.

Third, a sometimes underappreciated aspect of platform layers is that they make it easier to scale an organization. At its best, a platform exposes a crisp product-agnostic interface which masks implementation details. If done well, this allows multiple independent teams to develop utilizing the platform’s capabilities, as well as another team implementing/optimizing the platform itself.

I had intended to go into moderate detail on handling multiple data-centers, but that topic truly deserves its own post, so I’ll only mention that cache invalidation and data replication/consistency become rather interesting problems at that stage.

I’m sure I’ve made some controversial statements in this post, which I hope the dear reader will argue with such that we can both learn a bit. Thanks for reading!
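As a rough illustration of such an interface, the sketch below has a web page and an API endpoint sharing one platform object that hides the cache and database behind `get_user`. The `UserPlatform` class, the dictionary-backed stores and the sample data are all hypothetical; in practice the platform would typically sit behind a network API rather than a local object.

```python
class UserPlatform:
    """Product-agnostic interface; callers never see the cache or database."""

    def __init__(self, cache, database):
        self._cache = cache        # dict-like stand-ins for illustration
        self._database = database

    def get_user(self, user_id):
        key = "user.%s" % user_id
        if key not in self._cache:
            user = self._database.get(user_id)
            if user is None:
                return None
            self._cache[key] = user
        return self._cache[key]


platform = UserPlatform(cache={}, database={1: {"name": "ada"}})


def web_profile_page(user_id):
    """Web application: renders HTML from the platform's data."""
    return "<h1>%s</h1>" % platform.get_user(user_id)["name"]


def api_profile(user_id):
    """API: returns the same data in another shape."""
    return {"user": platform.get_user(user_id)}


page = web_profile_page(1)
payload = api_profile(1)
```

Neither caller contains caching or database code, so the team that owns the platform can change either without coordinating with the product teams.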

Hi folks. I'm Will Larson.
