This repository contains the final project of my Data Science course. The project involved developing a machine learning model that predicts the cost of public sector projects in the UK. The focus is on analyzing historical data and developing a model to predict future costs.

rauschgold/ds-bootcamp-capstone-project

This is a skeleton project for building a simple model in a Python script or notebook and logging the results to MLflow.

There are two ways to do it:

- In Jupyter notebooks: We train a simple model in the Jupyter notebook, where we select only some features and do minimal cleaning. The hyperparameters of feature engineering and modeling are logged with MLflow.

- With Python scripts: The main script goes through exactly the same process as the Jupyter notebook and also logs the hyperparameters with MLflow.

The data used is the coffee quality dataset.

- pyenv with Python: 3.11.3

Use the requirements file in this repo to create a new environment.

    make setup

    # or

    pyenv local 3.11.3
    python -m venv .venv
    source .venv/bin/activate
    pip install --upgrade pip
    pip install -r requirements_dev.txt

The requirements.txt file contains the libraries needed for deployment of a model or dashboard, so it does not include Jupyter or other libraries used only during development.

The MLflow URI should not be stored in git. You have two options. The first is to save it locally in the .mlflow_uri file:

    echo http://127.0.0.1:5000/ > .mlflow_uri

This creates a local file that stores the URI and is not pushed to GitHub (.mlflow_uri is listed in the .gitignore file). Alternatively, you can export it as an environment variable with

    export MLFLOW_URI=http://127.0.0.1:5000/

This links to your local MLflow. If you want to use a different one, change the URI accordingly.

The code in config.py first tries to read the URI from the local file. If the file doesn't exist, it looks in the environment variable. If that is not set either, the URI will be empty in your code.
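The lookup order described above can be sketched as follows. This is a minimal illustration, not the repository's actual config.py: the function name `read_mlflow_uri` and its parameters are hypothetical, and only the file name `.mlflow_uri` and the variable name `MLFLOW_URI` come from this README.

```python
import os
from pathlib import Path


def read_mlflow_uri(uri_file: str = ".mlflow_uri", env_var: str = "MLFLOW_URI") -> str:
    """Return the tracking URI from the local file, else the env var, else ""."""
    path = Path(uri_file)
    if path.is_file():
        # A file written with `echo` ends in a newline, so strip whitespace.
        return path.read_text().strip()
    # Fall back to the environment variable; empty string if it is not set.
    return os.environ.get(env_var, "")
```

Because the result can be an empty string, it may be worth checking it before passing it on to MLflow.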

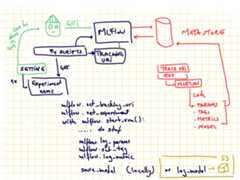

You can create an MLflow experiment via the GUI or, if you use the local MLflow, via the command line:

    mlflow experiments create --experiment-name 0-template-ds-modeling

Check your local MLflow

    mlflow ui

and open the link http://127.0.0.1:5000

The create command will throw an error if the experiment already exists. Save the experiment name in the config file.
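If you prefer to create the experiment from Python and avoid the error for an existing experiment, one option is to look it up by name first. The sketch below is an assumption about how you might do that, not part of this repository: the helper name `ensure_experiment` and its `tracking` parameter are hypothetical, while `get_experiment_by_name` and `create_experiment` are functions of the `mlflow` module. The tracking URI is assumed to be set beforehand.

```python
def ensure_experiment(name, tracking=None):
    """Return the experiment id for `name`, creating the experiment only if missing."""
    if tracking is None:
        # Imported lazily so the helper can be exercised with a stand-in object.
        import mlflow
        tracking = mlflow
    existing = tracking.get_experiment_by_name(name)  # None if it does not exist
    if existing is not None:
        return existing.experiment_id
    return tracking.create_experiment(name)  # returns the new experiment id
```

Calling `ensure_experiment("0-template-ds-modeling")` twice should return the same id both times instead of failing on the second call.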

To train the model, store the test data in the data folder, and save the model in models, run:

    # activate env
    source .venv/bin/activate
    python -m modeling.train

To test that predict works on a test set you created, run:

    python modeling/predict.py models/linear data/X_test.csv data/y_test.csv

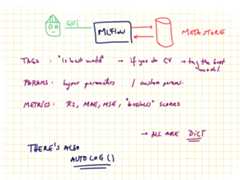

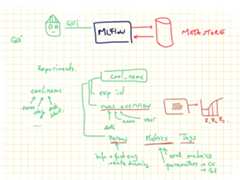

MLflow is a tool for tracking ML experiments. You can run it locally or remotely. It stores all the information about experiments in a database, and you can see the overview via the GUI or access it via APIs. Sending data to MLflow is also done via APIs. With MLflow you can also store models on S3, where you can version them and tag them as production for serving.

You can group model trainings in experiments. The granularity of an experiment is up to your use case. We recommend one experiment per data product, because you can compare the results of all the runs within an experiment.

To send data about your model, you need to set the connection information: the tracking URI and the experiment name (otherwise the default experiment is used). One run represents a model, and all the rest is metadata. For example, if you want to save train MSE, test MSE and validation MSE, you need to name them as three different metrics. If you are doing cross-validation (CV), you can set the tracking as nested.
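As a rough sketch of the paragraph above, the snippet below computes one MSE per data split, gives each its own metric name, and logs them in a single run. The helper names (`mse`, `named_mse_metrics`, `log_run`) and the `<split>_mse` naming scheme are assumptions for illustration and are not taken from this repository. The MLflow calls used are `set_tracking_uri`, `set_experiment`, `start_run` and `log_metrics`.

```python
def mse(y_true, y_pred):
    """Mean squared error of two equally long sequences of numbers."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def named_mse_metrics(splits):
    """Map {"train": (y_true, y_pred), ...} to {"train_mse": ..., ...}."""
    return {f"{split}_mse": mse(y_true, y_pred)
            for split, (y_true, y_pred) in splits.items()}


def log_run(metrics, tracking_uri, experiment_name):
    """Log one run holding all metrics; requires a reachable MLflow server."""
    import mlflow  # imported here so the helpers above work without MLflow installed

    mlflow.set_tracking_uri(tracking_uri)   # connection information
    mlflow.set_experiment(experiment_name)  # otherwise the default experiment is used
    with mlflow.start_run():
        mlflow.log_metrics(metrics)         # each key becomes a separate metric
```

With a local server running, `log_run(named_mse_metrics(splits), "http://127.0.0.1:5000/", "0-template-ds-modeling")` would record the metrics as separate entries of one run.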

Runs are not required to track the same metadata. That is, each run can track different tags, different metrics, and different parameters. This flexibility is useful because, in CV for example, some parameters might not exist for some runs.

- tags can be anything you want. For example, if you do CV, you might want to tag the best model as "best"

- params are well suited for hyperparameters and also for information about the data pipeline you use, such as whether you apply scaling or normalization

- metrics should be numeric values, as these can be plotted
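To make the three kinds of metadata concrete, here is a hedged sketch of nested CV tracking: a parent run holds the params, each fold is a nested child run with its own metrics, and the fold with the lowest validation MSE gets a "best" tag. The function name `log_cv_runs`, the `tracking` parameter, the run names and the `val_mse` key are hypothetical. `start_run(nested=True)`, `log_params`, `log_metrics` and `set_tag` are functions of the `mlflow` module.

```python
def log_cv_runs(fold_results, params, tracking=None):
    """Log one nested run per fold and tag the fold with the lowest val_mse.

    fold_results: list of dicts with numeric metrics, one dict per fold.
    params: dict of hyperparameters and pipeline settings shared by all folds.
    """
    if tracking is None:
        # Imported lazily so the function can be exercised with a stand-in object.
        import mlflow
        tracking = mlflow
    best_fold = min(range(len(fold_results)),
                    key=lambda i: fold_results[i]["val_mse"])
    with tracking.start_run(run_name="cv"):
        tracking.log_params(params)  # e.g. {"scaler": "standard"}
        for i, metrics in enumerate(fold_results):
            with tracking.start_run(run_name=f"fold-{i}", nested=True):
                tracking.log_metrics(metrics)  # numeric values only
                if i == best_fold:
                    tracking.set_tag("best", "true")
    return best_fold
```

Only the best fold carries the tag here, which also shows that runs in the same experiment do not need identical metadata.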
